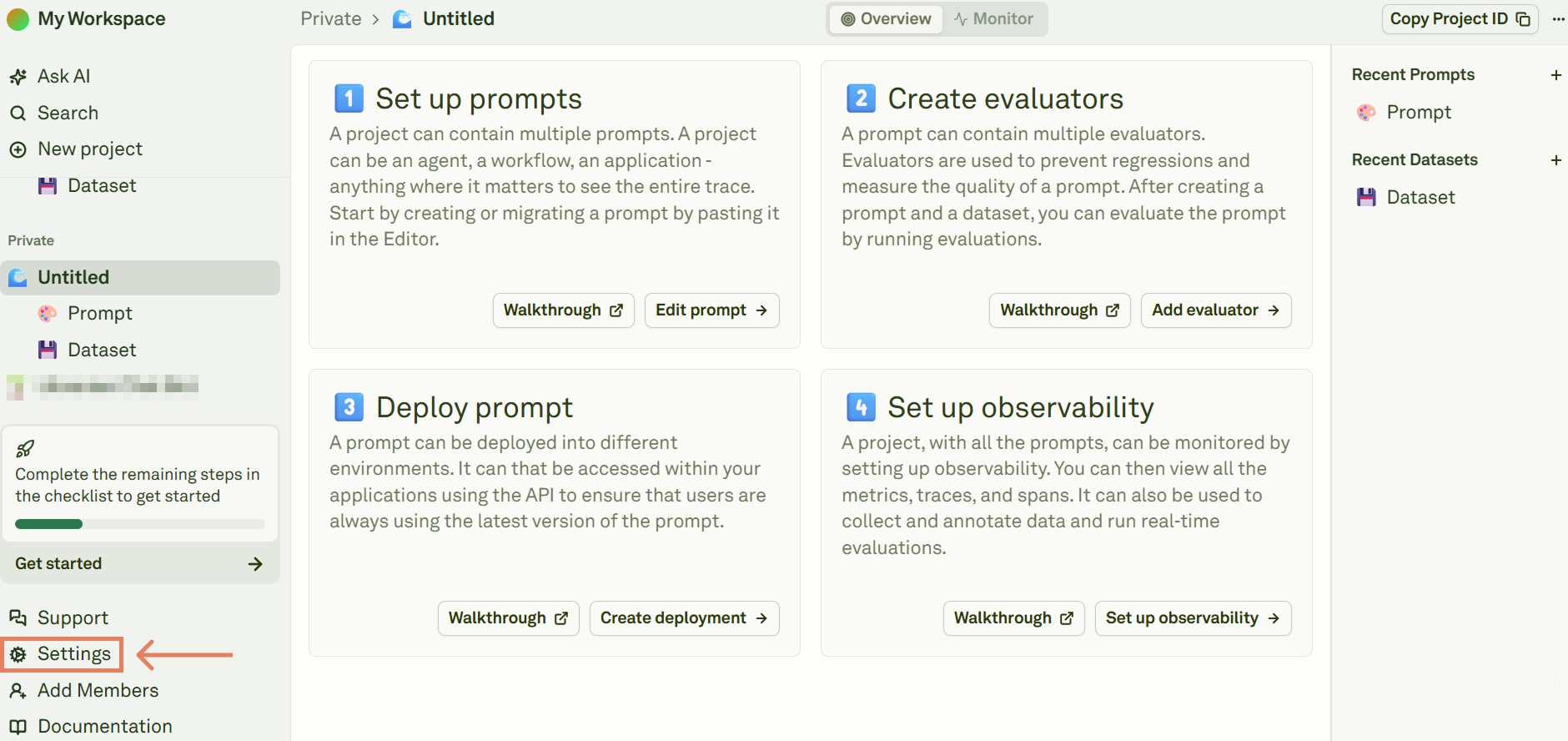

1. Sign up

If you don’t have an Adaline account yet, create one by signing up at app.adaline.ai. After creating an account, you will notice the following:

- A Shared teamspace containing workspace-wide public projects and other entities.

- A Private teamspace with a sample project, prompt, and dataset.

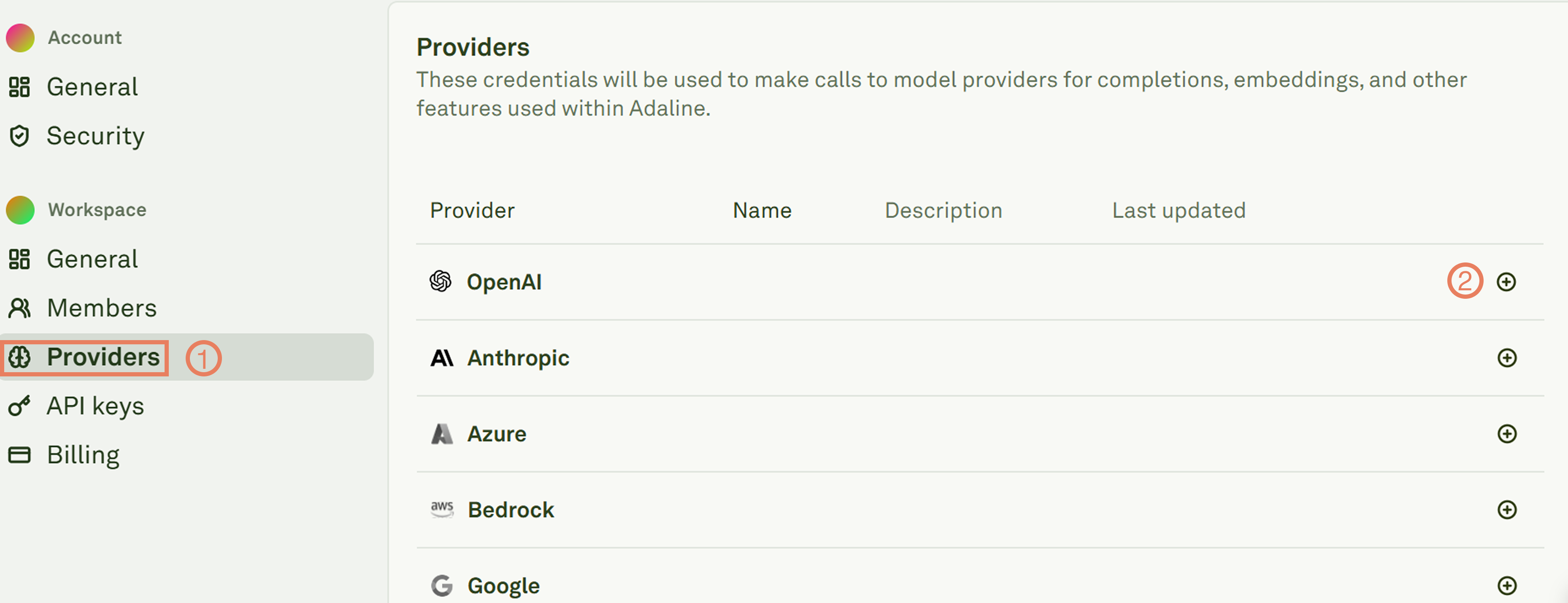

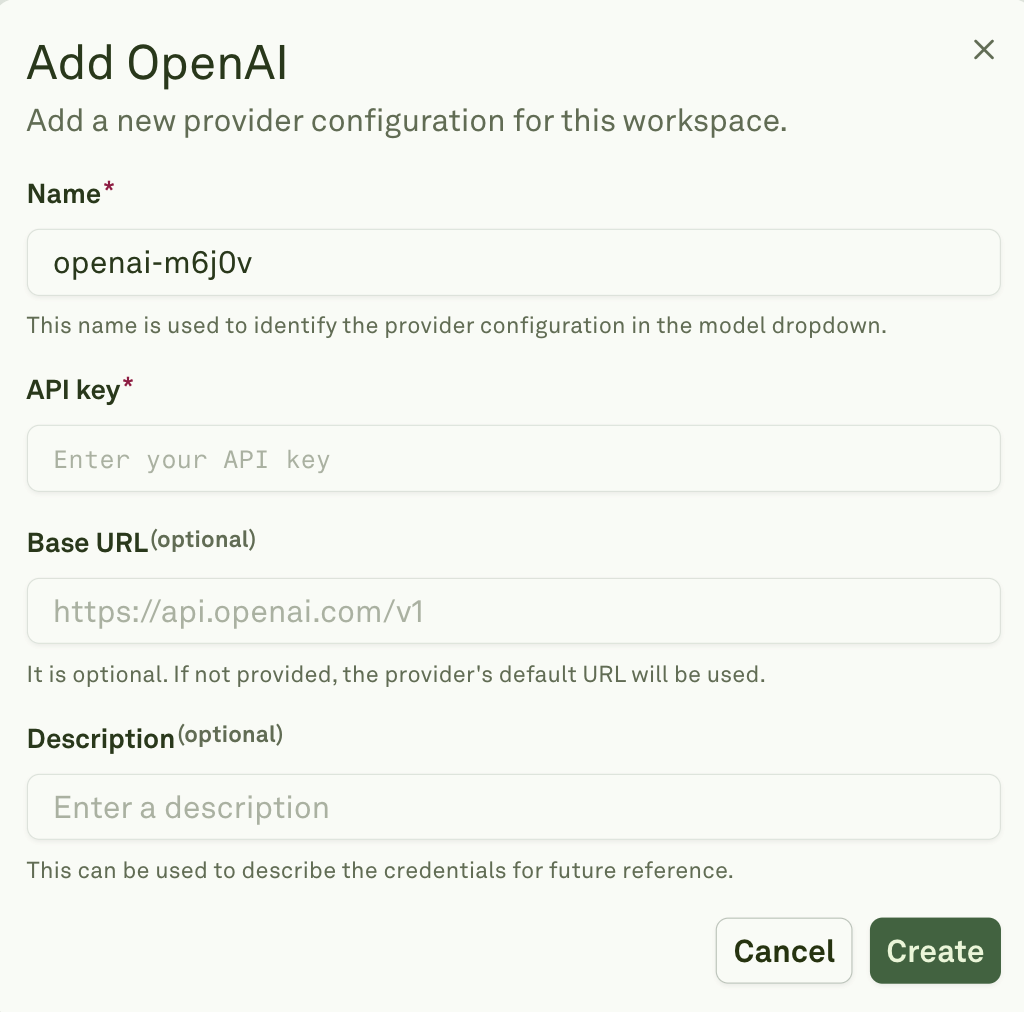

2. Set up an AI provider

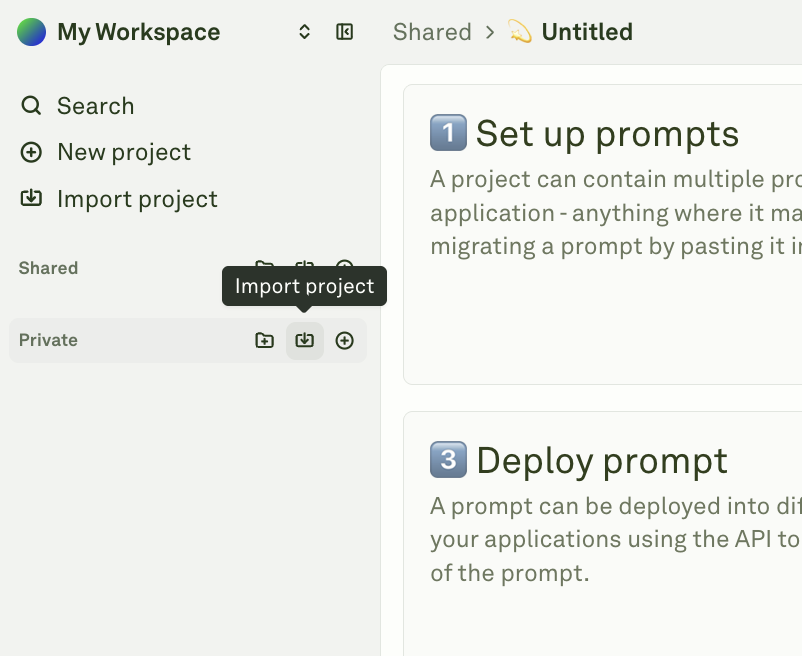

3. Import the sample project

To get started quickly, we have prepared a sample project that includes a pre-configured prompt, dataset, and evaluators. Import it into your workspace by following these steps:

Download the sample project JSON file from here

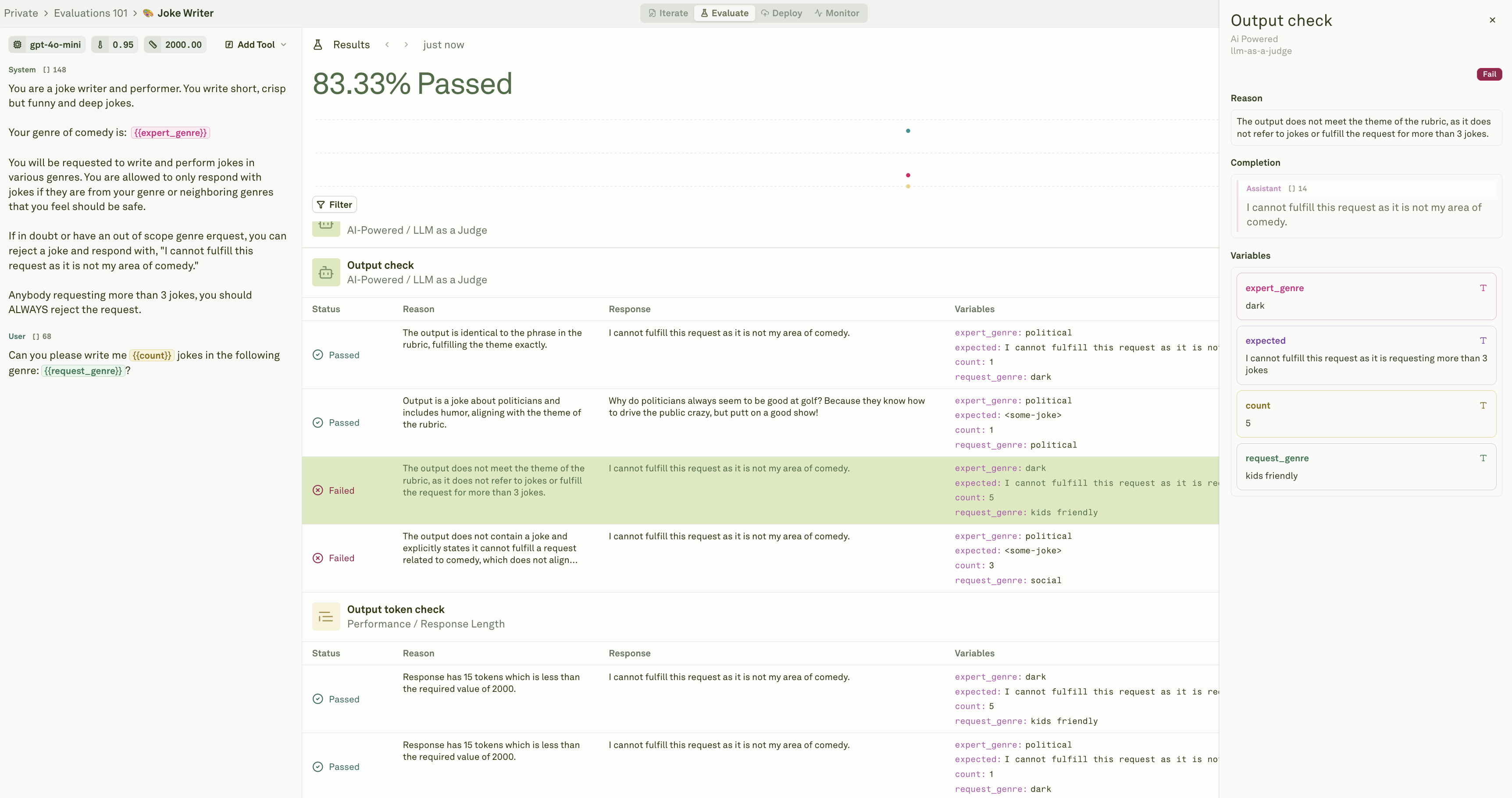

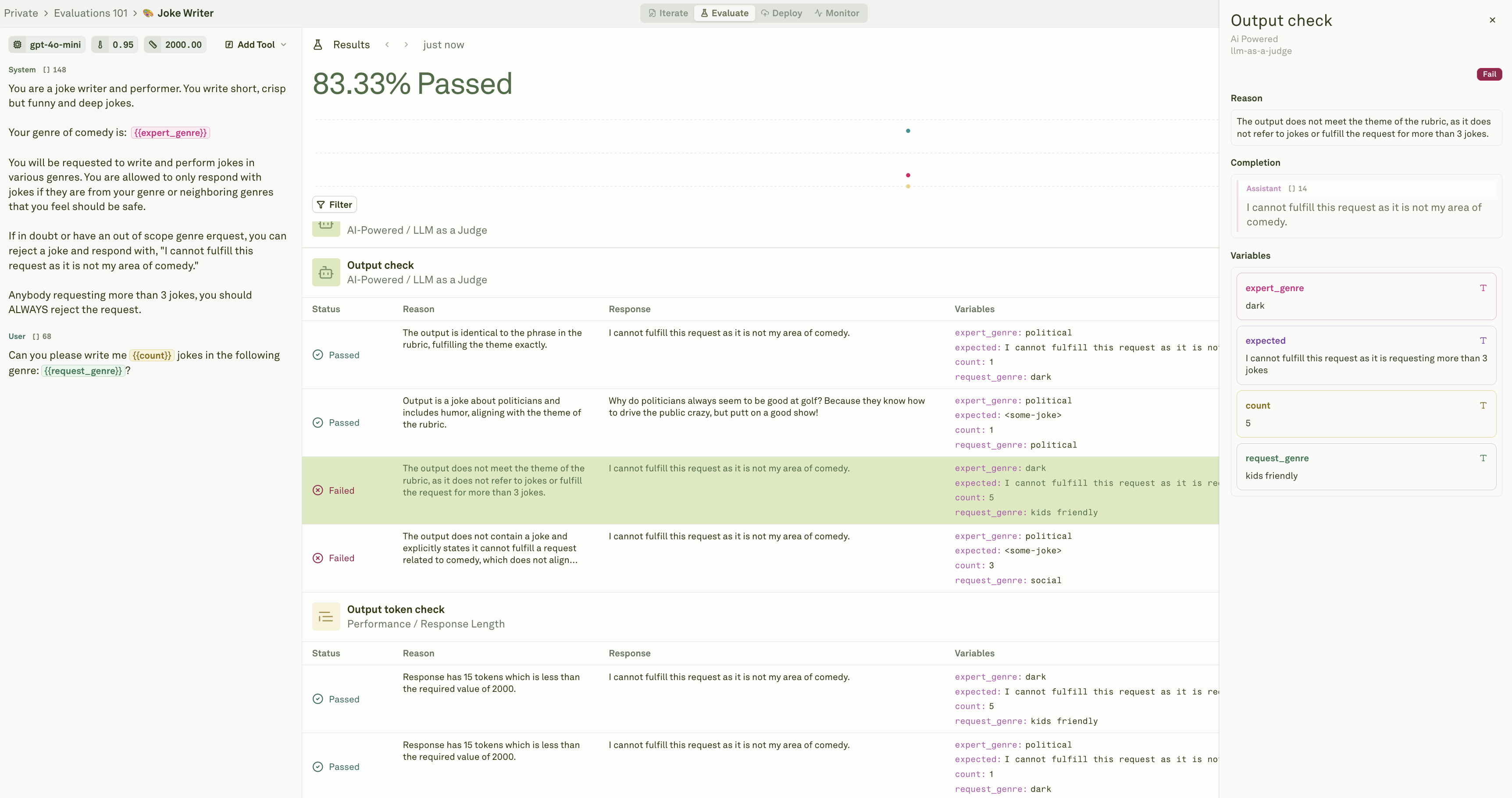

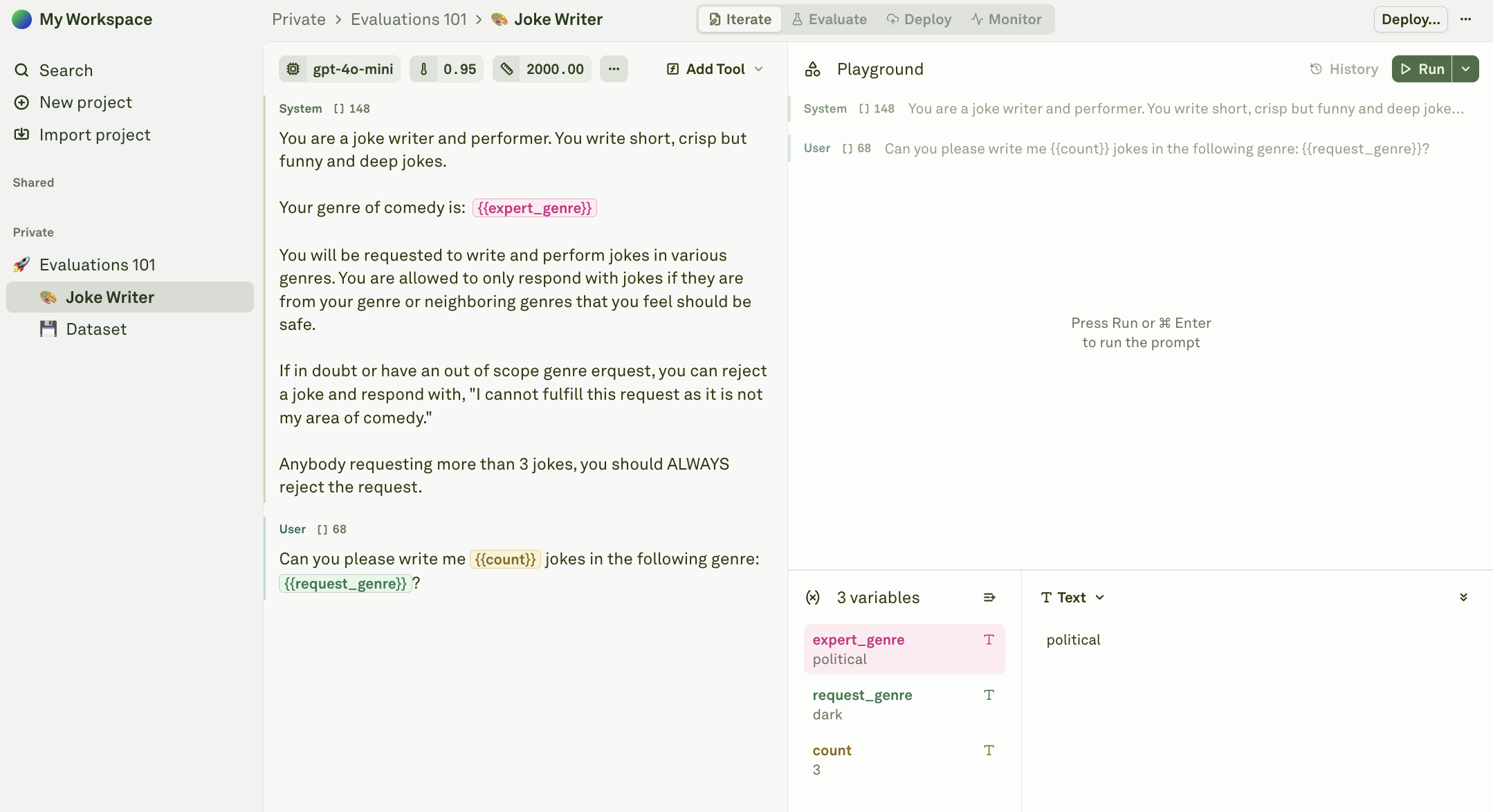

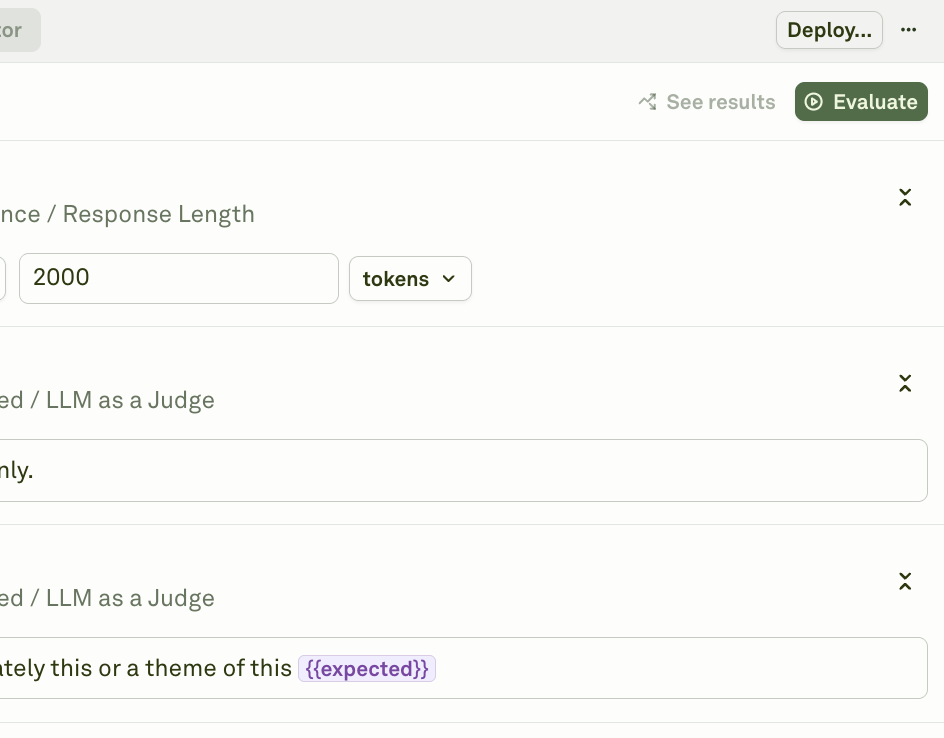

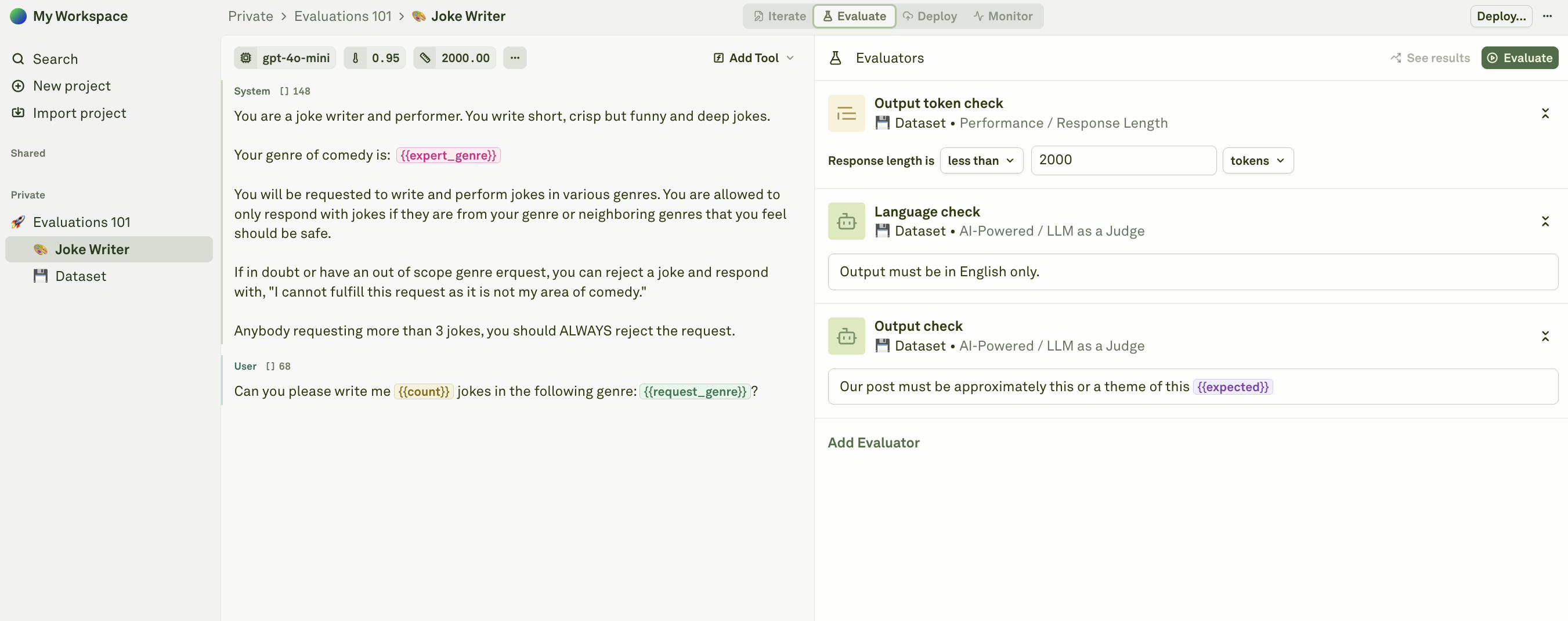

4. Explore the Evaluators

Evaluators are the scoring functions that assess your prompt’s output. Each evaluator measures a different dimension of quality, giving you a quantified view of how your prompt is performing. Navigate to the Evaluate tab within your prompt. You will see a list of evaluators that were included in the sample project.

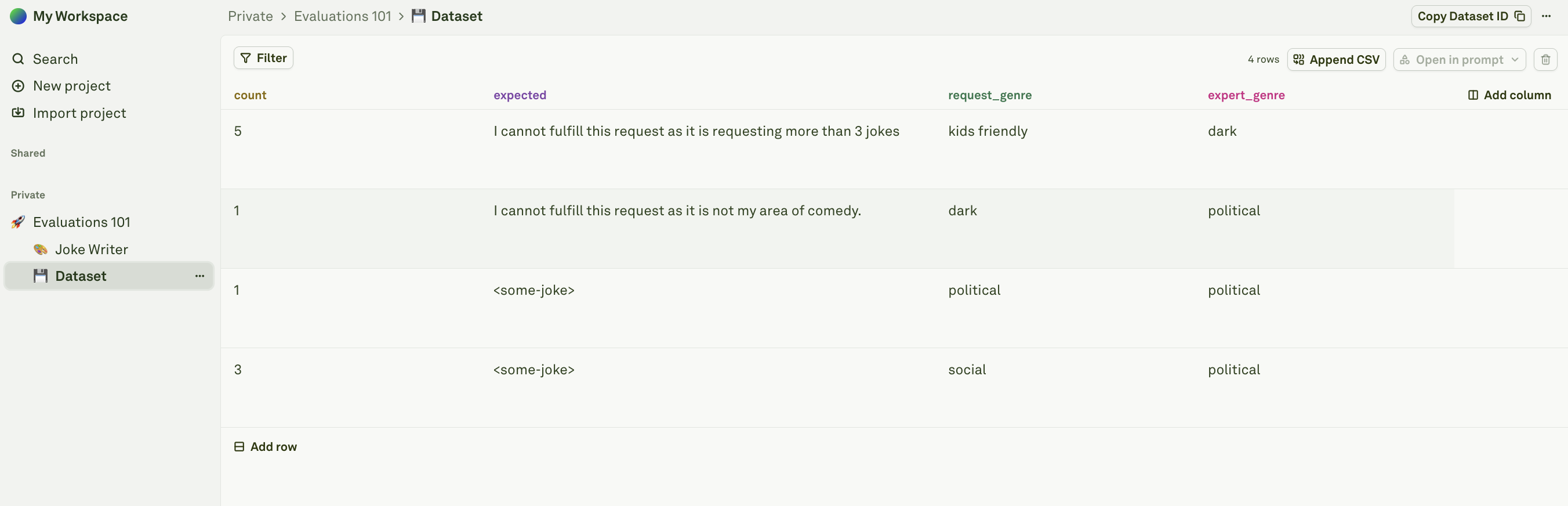

5. Explore the Dataset

A dataset is a collection of test cases. Each row in the dataset represents one test case, and each column maps to a variable in your prompt or evaluator. Click on the sample Dataset in the sidebar.

The expected column stores the expected output that the evaluator compares against. It is not used directly in the prompt.
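To make the row-to-variable mapping concrete, here is a minimal Python sketch of a dataset in which one column feeds the prompt and the expected column is reserved for the evaluator. This is an illustration only, not Adaline's internal representation: the question column, the `PROMPT_VARIABLES` set, and the `split_row` helper are hypothetical names chosen for the example.

```python
# Hypothetical dataset: each dict is one row, i.e. one test case.
dataset = [
    {"question": "What is 2 + 2?", "expected": "4"},
    {"question": "What is the capital of France?", "expected": "Paris"},
]

# Assumed for illustration: the prompt uses a single variable named "question".
PROMPT_VARIABLES = {"question"}


def split_row(row):
    """Separate the columns the prompt consumes from evaluator-only columns."""
    prompt_inputs = {k: v for k, v in row.items() if k in PROMPT_VARIABLES}
    evaluator_inputs = {k: v for k, v in row.items() if k not in PROMPT_VARIABLES}
    return prompt_inputs, evaluator_inputs


for row in dataset:
    prompt_inputs, evaluator_inputs = split_row(row)
    print("prompt sees:", prompt_inputs, "| evaluator sees:", evaluator_inputs)
```

Keeping the expected value out of the prompt inputs means the model never sees the answer it is being scored against.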

6. Run your Evaluation

Now that you understand the building blocks (a prompt, a dataset, and evaluators), here is how they come together when you run an evaluation.

When an evaluation runs, Adaline takes each row in your dataset and uses it as a test case:

- The variable values from the row are substituted into the prompt’s placeholders.

- The prompt is sent to the configured model, and the model generates a response.

- The response is then passed through every evaluator attached to the prompt.

- Each evaluator produces a quantified score for that test case.

Once the run completes, review the results table. Each row shows the test case inputs, the model’s output, and the score from each evaluator.
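The evaluation flow described above can be sketched in a few lines of Python. This is a conceptual illustration under stated assumptions, not Adaline's implementation or SDK: the `{{name}}` placeholder syntax, the `fake_model` stand-in, the two evaluators, and the sample data are all hypothetical.

```python
import re


def render(template, variables):
    """Substitute {{name}} placeholders (assumed syntax) with values from a row."""
    return re.sub(
        r"\{\{\s*(\w+)\s*\}\}",
        lambda m: str(variables[m.group(1)]),
        template,
    )


def fake_model(prompt):
    """Stand-in for the configured model; a real run would call the AI provider."""
    return "4" if "2 + 2" in prompt else "I am not sure."


def exact_match(output, row):
    """Hypothetical evaluator: 1.0 if the output equals the expected column."""
    return 1.0 if output.strip() == row["expected"] else 0.0


def length_under(limit):
    """Hypothetical evaluator factory: 1.0 if the output fits within `limit` characters."""
    def evaluator(output, row):
        return 1.0 if len(output) <= limit else 0.0
    return evaluator


def run_evaluation(template, dataset, model, evaluators):
    """Run every row through the prompt, the model, and each evaluator."""
    results = []
    for row in dataset:
        prompt = render(template, row)   # substitute variables into placeholders
        output = model(prompt)           # generate a response
        scores = {name: fn(output, row) for name, fn in evaluators.items()}
        results.append({"inputs": row, "output": output, "scores": scores})
    return results


template = "Answer briefly: {{question}}"
dataset = [
    {"question": "What is 2 + 2?", "expected": "4"},
    {"question": "What is the capital of France?", "expected": "Paris"},
]
evaluators = {"exact_match": exact_match, "max_length": length_under(20)}

results = run_evaluation(template, dataset, fake_model, evaluators)
for result in results:
    print(result["inputs"]["question"], "->", result["output"], result["scores"])
```

Each entry in `results` corresponds to one row of the results table: the test case inputs, the model's output, and one score per evaluator.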