When you run evaluations in the Evaluate pillar, you test against a fixed dataset that may not cover every production scenario. Continuous evaluations solve this by automatically assessing the quality of your deployed prompts on live traffic, catching regressions and new failure patterns as they happen.

How it works

Once configured, continuous evaluations automatically:

- Sample incoming spans — A percentage of LLM spans associated with your prompt are selected based on the sample rate.

- Run evaluators — The sampled spans’ output is evaluated against the evaluators you configured for the prompt.

- Store results — Evaluation scores are attached to the spans and visible in the logs and charts.
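The three steps above can be sketched as a small function. This is a minimal illustration under assumed data shapes. The `Span`, `Evaluator`, and `EvalResult` types and the `continuouslyEvaluate` function are hypothetical and are not part of Adaline's SDK. The function keeps only spans for one prompt and samples each one with probability equal to the sample rate. It then runs every evaluator on the sampled span's output and attaches the score and reason to that span.

```typescript
// Hypothetical sketch of the sample -> evaluate -> store loop.
// These types are illustrative only, not Adaline's API.

interface EvalResult {
  evaluator: string;
  score: number; // assumed normalized to [0, 1]
  reason: string;
}

interface Span {
  id: string;
  promptId: string;
  output: string;
  evaluations: EvalResult[];
}

interface Evaluator {
  name: string;
  evaluate(output: string): { score: number; reason: string };
}

function continuouslyEvaluate(
  spans: Span[],
  promptId: string,
  sampleRate: number,
  evaluators: Evaluator[],
  random: () => number = Math.random, // returns a float in [0, 1)
): Span[] {
  const evaluated: Span[] = [];
  for (const span of spans) {
    // 1. Sample: skip other prompts, keep each span with probability sampleRate.
    if (span.promptId !== promptId || random() >= sampleRate) continue;
    // 2. Run every configured evaluator on the span's output.
    for (const evaluator of evaluators) {
      const { score, reason } = evaluator.evaluate(span.output);
      // 3. Store: attach the result directly to the span.
      span.evaluations.push({ evaluator: evaluator.name, score, reason });
    }
    evaluated.push(span);
  }
  return evaluated;
}

// Usage: a trivial evaluator that fails empty outputs.
const nonEmpty: Evaluator = {
  name: "non-empty",
  evaluate: (output) =>
    output.trim().length > 0
      ? { score: 1, reason: "Output is non-empty." }
      : { score: 0, reason: "Output is empty." },
};

const spans: Span[] = [
  { id: "s1", promptId: "p1", output: "Hello", evaluations: [] },
  { id: "s2", promptId: "p1", output: "", evaluations: [] },
  { id: "s3", promptId: "p2", output: "Other prompt", evaluations: [] },
];

const results = continuouslyEvaluate(spans, "p1", 1, [nonEmpty]);
console.log(JSON.stringify(results.map((s) => [s.id, s.evaluations[0].score])));
// [["s1",1],["s2",0]]
```

Attaching results to the span object mirrors the page's description of scores being stored on spans. A real system would persist them rather than mutate in memory.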

Configure continuous evaluations

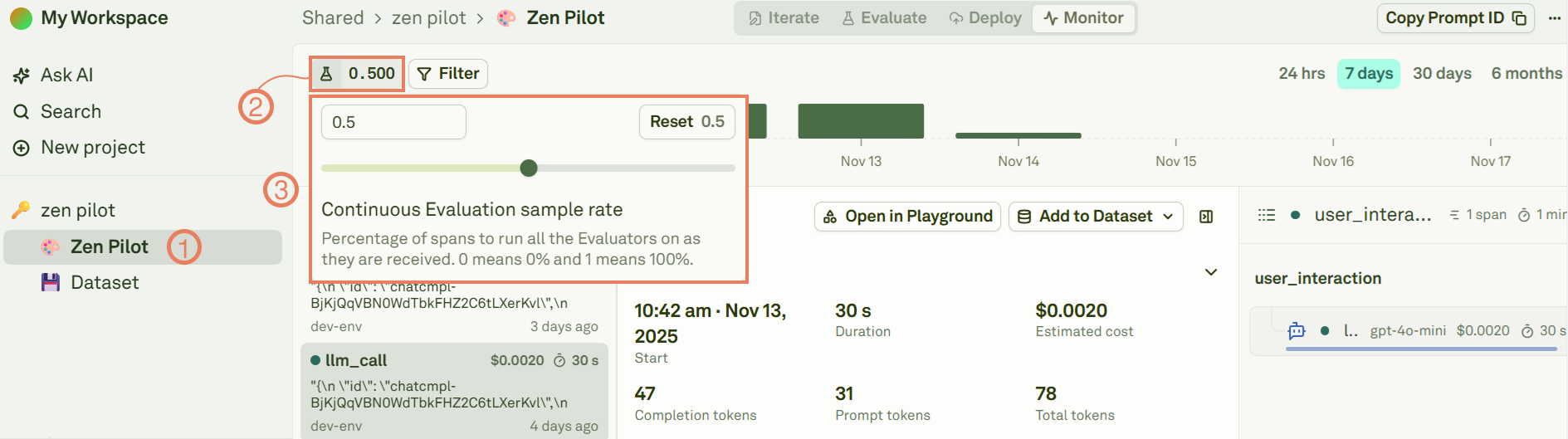

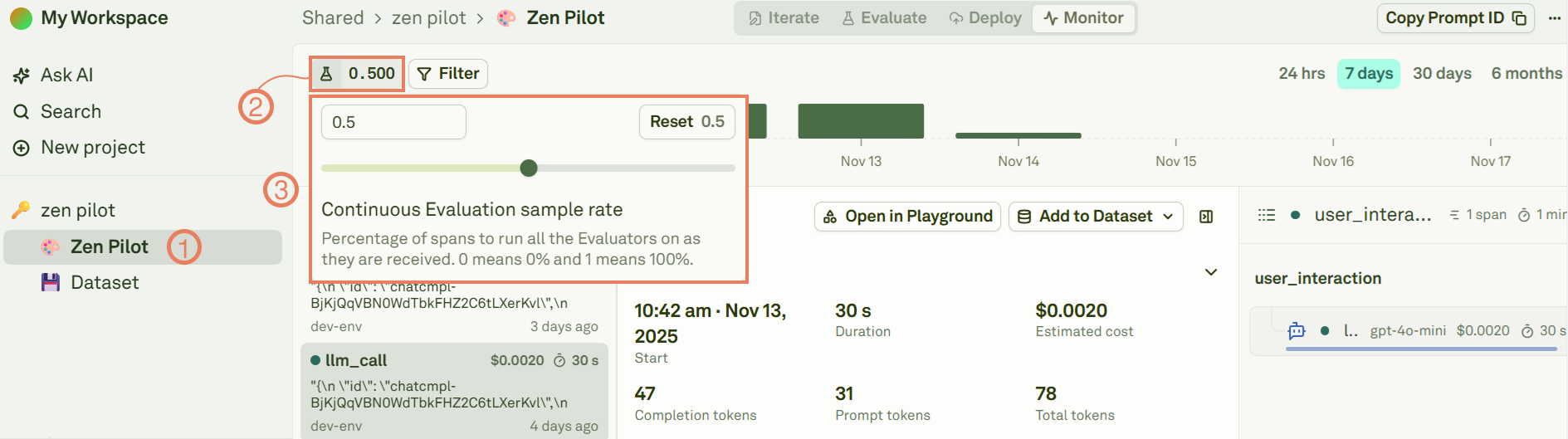

Select a prompt

Navigate to the Monitor section of a monitored project and select the prompt you want to continuously evaluate.

Set the sample rate

Define the continuous evaluation sample rate — a value between 0 and 1:

| Sample rate | Behavior |
|---|---|
| 0 | No logs are evaluated. Continuous evaluation is disabled. |
| 0.5 | 50% of incoming spans are randomly sampled and evaluated. |
| 1 | 100% of incoming spans are evaluated. |
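One way to model the table is as an independent random draw per span, where the draw succeeds with probability equal to the sample rate. The sketch below assumes that model. `shouldEvaluate`, its `forceEvaluation` parameter, and the seeded `mulberry32` generator are illustrative names and are not Adaline's implementation. `forceEvaluation` is a stand-in for the per-span override described under "Override the sample rate".

```typescript
// Hypothetical model of the sample-rate decision; not Adaline's implementation.

type Rng = () => number; // returns a float in [0, 1)

function shouldEvaluate(sampleRate: number, rng: Rng, forceEvaluation = false): boolean {
  if (!Number.isFinite(sampleRate) || sampleRate < 0 || sampleRate > 1) {
    throw new RangeError(`sample rate must be between 0 and 1, got ${sampleRate}`);
  }
  // A per-span override bypasses sampling entirely.
  if (forceEvaluation) return true;
  // rng() is in [0, 1), so rate 0 never passes and rate 1 always passes.
  return rng() < sampleRate;
}

// Small seeded PRNG (mulberry32) so the demo is reproducible.
function mulberry32(seed: number): Rng {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Fraction of n simulated spans that would be evaluated at a given rate.
function fractionSampled(sampleRate: number, n: number, seed: number): number {
  const rng = mulberry32(seed);
  let hits = 0;
  for (let i = 0; i < n; i++) {
    if (shouldEvaluate(sampleRate, rng)) hits++;
  }
  return hits / n;
}

console.log(fractionSampled(0, 10000, 42)); // 0
console.log(fractionSampled(1, 10000, 42)); // 1
console.log(fractionSampled(0.5, 10000, 42)); // roughly 0.5; exact value depends on the seed
```

With per-span draws, a rate of 0.5 evaluates about half of the spans, not exactly half. The realized fraction is noisier when traffic is low.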

Configure evaluators

Set up the evaluators that will run on sampled spans. See Setup Evaluators for the available evaluator types:

- LLM-as-a-Judge — Assess response quality against a rubric.

- JavaScript — Validate output format and business logic.

- Text Matcher — Check for required or banned patterns.

- Cost, Latency, Response Length — Track operational metrics.
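The functions below illustrate what the JavaScript and Text Matcher evaluator types can check. They implement one format and business-logic rule, plus one required/banned pattern rule, in plain TypeScript. The `EvalOutcome` shape, the JSON fields, and the 20% discount cap are hypothetical. See Setup Evaluators for the signature that Adaline's JavaScript evaluators actually expect.

```typescript
// Illustrative evaluator logic only; the EvalOutcome shape and the rules
// are assumptions, not Adaline's evaluator interface.

interface EvalOutcome {
  passed: boolean;
  reason: string;
}

// Format + business logic: output must be a JSON object with a string
// "answer" and a numeric "discountPercent" at or below a hypothetical 20% cap.
function checkFormatAndPolicy(output: string): EvalOutcome {
  let parsed: unknown;
  try {
    parsed = JSON.parse(output);
  } catch (e) {
    return { passed: false, reason: "Output is not valid JSON." };
  }
  if (typeof parsed !== "object" || parsed === null) {
    return { passed: false, reason: "Output is not a JSON object." };
  }
  const record = parsed as Record<string, unknown>;
  const answer = record["answer"];
  const discount = record["discountPercent"];
  if (typeof answer !== "string") {
    return { passed: false, reason: 'Missing string field "answer".' };
  }
  if (typeof discount !== "number" || discount > 20) {
    return { passed: false, reason: "discountPercent is missing or above the 20% cap." };
  }
  return { passed: true, reason: "Valid JSON within policy." };
}

// Text matcher: fail if a banned pattern appears or a required pattern is missing.
function matchText(output: string, required: RegExp[], banned: RegExp[]): EvalOutcome {
  const hit = banned.find((re) => re.test(output));
  if (hit) return { passed: false, reason: `Banned pattern matched: ${hit}` };
  const missing = required.find((re) => !re.test(output));
  if (missing) return { passed: false, reason: `Required pattern missing: ${missing}` };
  return { passed: true, reason: "All pattern checks passed." };
}

console.log(checkFormatAndPolicy('{"answer":"Yes","discountPercent":10}').passed); // true
console.log(checkFormatAndPolicy('{"answer":"Yes","discountPercent":35}').passed); // false
console.log(matchText("Contact support@example.com", [/support@/], [/password/i]).passed); // true
```

The patterns deliberately omit the `g` flag. `RegExp.prototype.test` on a global regex keeps `lastIndex` state between calls, which would make repeated checks unreliable.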

Model spans (LLM calls) have their output values evaluated against the configured evaluators, subject to the sample rate.

Override the sample rate

You can force evaluation on a specific span regardless of the sample rate. This is useful for high-priority requests that you always want evaluated, or for testing your evaluator configuration on specific requests. The override can be set through the TypeScript SDK, the Python SDK, the REST API, or the Proxy.

Set `runEvaluation: true` when creating a span, or enable it after creation via the `update` method. See the Span class reference for the full API.

View results

Continuous evaluation results appear in multiple places:

| Location | What you see |
|---|---|
| Span details | Evaluation score and reason attached to each evaluated span. |
| Charts | The Avg eval score chart shows quality trends over time. |
| Trace view | Evaluated spans are marked with their score in the trace tree. |
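The Avg eval score chart aggregates scores over time. Below is a minimal sketch of that kind of aggregation. It assumes each evaluated span carries a timestamp and a single numeric score, and that scores are averaged in hourly buckets. The `EvaluatedSpan` type and `averageScoreByHour` function are hypothetical, and the chart's actual bucketing is not documented here.

```typescript
// Hypothetical aggregation behind an "average eval score over time" series.

interface EvaluatedSpan {
  timestampMs: number; // when the span was recorded (Unix epoch ms)
  score: number;       // evaluation score attached to the span
}

const HOUR_MS = 60 * 60 * 1000;

function averageScoreByHour(
  spans: EvaluatedSpan[],
): { hourStartMs: number; average: number }[] {
  const sums = new Map<number, { total: number; count: number }>();
  for (const { timestampMs, score } of spans) {
    // Floor each timestamp to the start of its hour.
    const bucket = Math.floor(timestampMs / HOUR_MS) * HOUR_MS;
    const entry = sums.get(bucket) ?? { total: 0, count: 0 };
    entry.total += score;
    entry.count += 1;
    sums.set(bucket, entry);
  }
  // Sort buckets chronologically and turn sums into averages.
  return Array.from(sums.entries())
    .sort((a, b) => a[0] - b[0])
    .map(([hourStartMs, { total, count }]) => ({ hourStartMs, average: total / count }));
}

const series = averageScoreByHour([
  { timestampMs: 0, score: 1 },
  { timestampMs: 30 * 60 * 1000, score: 0.5 },
  { timestampMs: HOUR_MS + 1, score: 0.25 },
]);
for (const { hourStartMs, average } of series) {
  console.log(new Date(hourStartMs).toISOString(), average);
}
// 1970-01-01T00:00:00.000Z 0.75
// 1970-01-01T01:00:00.000Z 0.25
```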

Best practices

- Start with a low sample rate — Begin at 0.1–0.2 to validate your evaluator configuration before scaling up.

- Use representative evaluators — Choose evaluators that measure the dimensions most important to your use case.

- Monitor the eval score chart — Watch the Avg eval score chart for trend changes that indicate quality regressions.

- Combine with alerts — Set up alerts to get notified when eval scores drop below a threshold.

- Iterate on evaluators — Refine your evaluator rubrics and thresholds based on what you observe in production.
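Here is a sketch of threshold-alert logic over recent eval scores that makes the "Combine with alerts" practice concrete. The `AlertRule` fields and their values are hypothetical, and they illustrate the idea only, not Adaline's alert configuration. The `minSamples` guard matters at low sample rates: with only a few scored spans, one bad score could otherwise trigger an alert.

```typescript
// Hypothetical threshold-alert rule over continuous evaluation scores.

interface AlertRule {
  threshold: number;  // alert when the rolling average falls below this
  windowSize: number; // number of most recent scores to average
  minSamples: number; // do not alert on fewer scores than this
}

function shouldAlert(scores: number[], rule: AlertRule): boolean {
  // Keep only the most recent windowSize scores (scores are oldest-first).
  const recent = scores.slice(-rule.windowSize);
  if (recent.length < rule.minSamples) return false;
  const average = recent.reduce((sum, s) => sum + s, 0) / recent.length;
  return average < rule.threshold;
}

const rule: AlertRule = { threshold: 0.7, windowSize: 5, minSamples: 3 };
console.log(shouldAlert([0.9, 0.8, 0.9, 0.85, 0.9], rule)); // false
console.log(shouldAlert([0.9, 0.9, 0.4, 0.5, 0.6, 0.5, 0.4], rule)); // true (last five average 0.48)
console.log(shouldAlert([0.1, 0.2], rule)); // false (fewer than minSamples)
```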

Next steps

Build Datasets from Logs

Capture production cases for offline evaluation.

Use Logs to Fix Prompts

Debug and improve prompts using production insights.