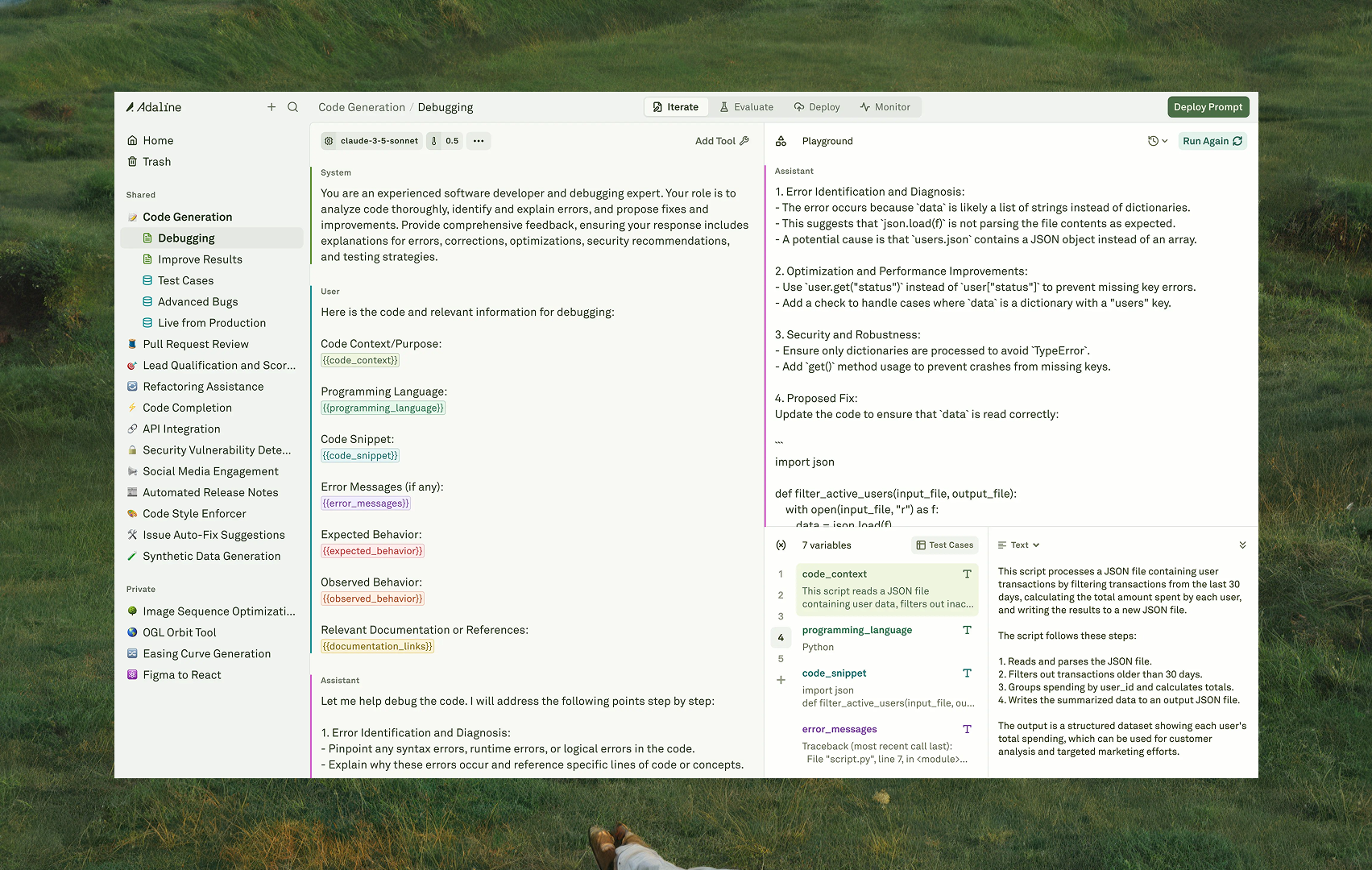

The Iterate pillar is where product and engineering teams come together to design, develop, and refine prompts in a collaborative environment. It covers the entire prompt creation lifecycle, from selecting a model and composing messages through adding dynamic variables and testing in a live playground. The workflow is: configure your model, compose your prompt with roles and content, wire up variables and tools, then run and iterate in the Playground until you are satisfied with the output.

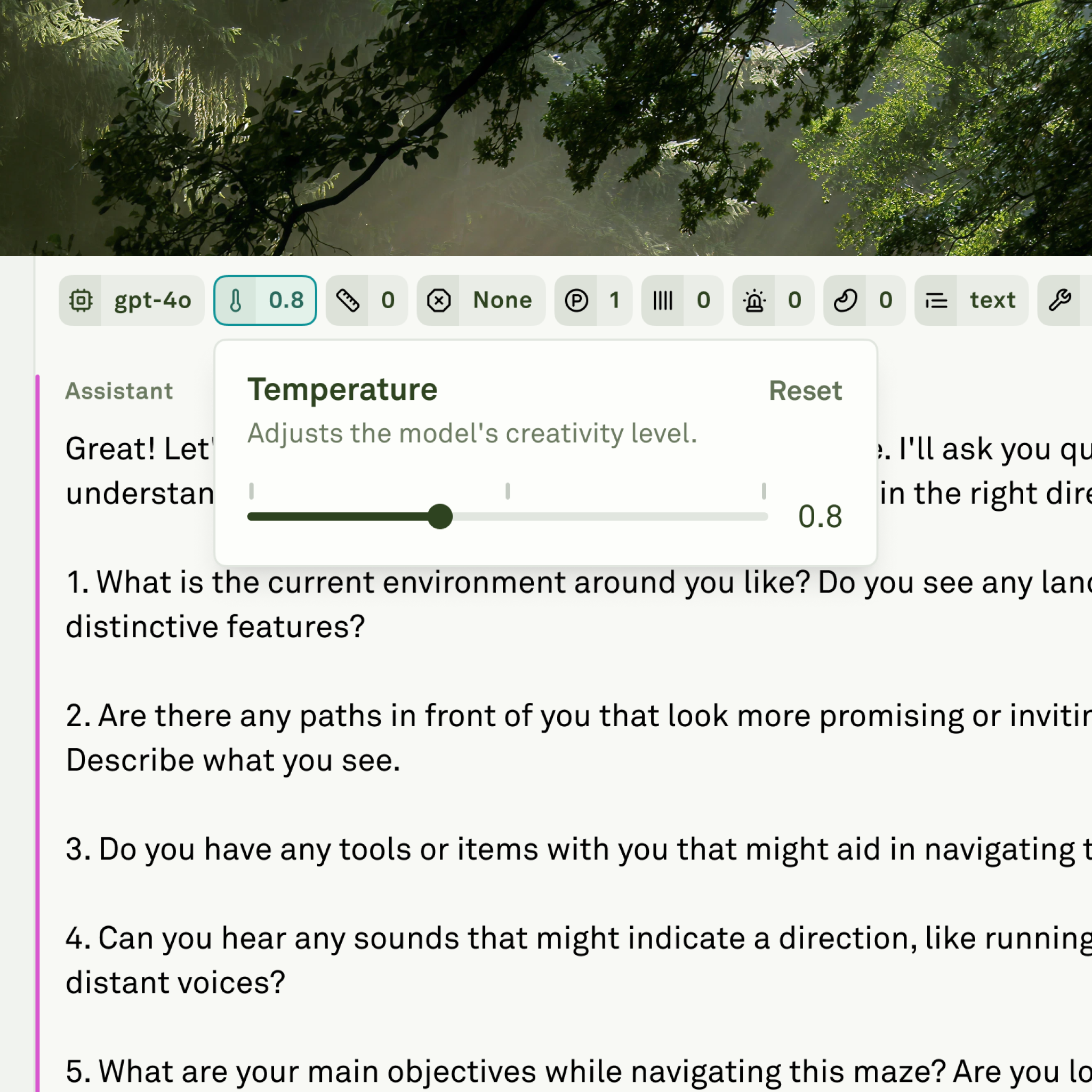

Model parameters

Every prompt starts with a model. The Editor displays all supported LLMs based on the providers configured in your workspace, such as OpenAI, Anthropic, and Google. Once you select a model, you can adjust generation settings such as temperature, max tokens, top-p, and frequency and presence penalties. You can also configure a response format that constrains the model’s output to free-form text, a JSON object, or a strict JSON schema.
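As a rough illustration of how these settings fit together, the sketch below groups them into one configuration object. The class name, field names, default values, and validation ranges are assumptions made for this example; they are not Adaline's schema, and valid ranges differ between providers.

```python
from dataclasses import dataclass, asdict
from typing import Literal, Optional


# Hypothetical container for the settings described above.
# Field names and ranges are illustrative assumptions only.
@dataclass
class GenerationSettings:
    provider: str
    model: str
    temperature: float = 0.7
    max_tokens: int = 512
    top_p: float = 1.0
    frequency_penalty: float = 0.0
    presence_penalty: float = 0.0
    response_format: Literal["text", "json_object", "json_schema"] = "text"
    json_schema: Optional[dict] = None

    def validate(self) -> None:
        """Reject obviously inconsistent settings before a run."""
        if self.temperature < 0:
            raise ValueError("temperature must be non-negative")
        if not 0 < self.top_p <= 1:
            raise ValueError("top_p must be in (0, 1]")
        if self.max_tokens <= 0:
            raise ValueError("max_tokens must be positive")
        if self.response_format == "json_schema" and self.json_schema is None:
            raise ValueError("json_schema format requires a schema")


# Low temperature plus a strict schema for a structured summary.
settings = GenerationSettings(
    provider="openai",
    model="example-model",  # placeholder model name
    temperature=0.2,
    response_format="json_schema",
    json_schema={
        "type": "object",
        "properties": {"summary": {"type": "string"}},
        "required": ["summary"],
    },
)
settings.validate()
config = asdict(settings)  # plain dict, ready to serialize
```

Keeping the response format next to the sampling settings makes it easy to check, in one place, that a strict-schema run actually carries a schema.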

Prompt composition

Prompts in Adaline are built from messages. Each message has a role — system, user, assistant, or tool — and a content block that holds the actual payload. The role determines how the model treats the message, while the content block holds what is sent — whether that is text, images, PDFs, or a combination.| Content type | What it supports |

|---|---|

| Text | The foundational content type. Write instructions, add /* comments */ for team annotations (stripped before sending), and embed {{variables}} for dynamic inputs. |

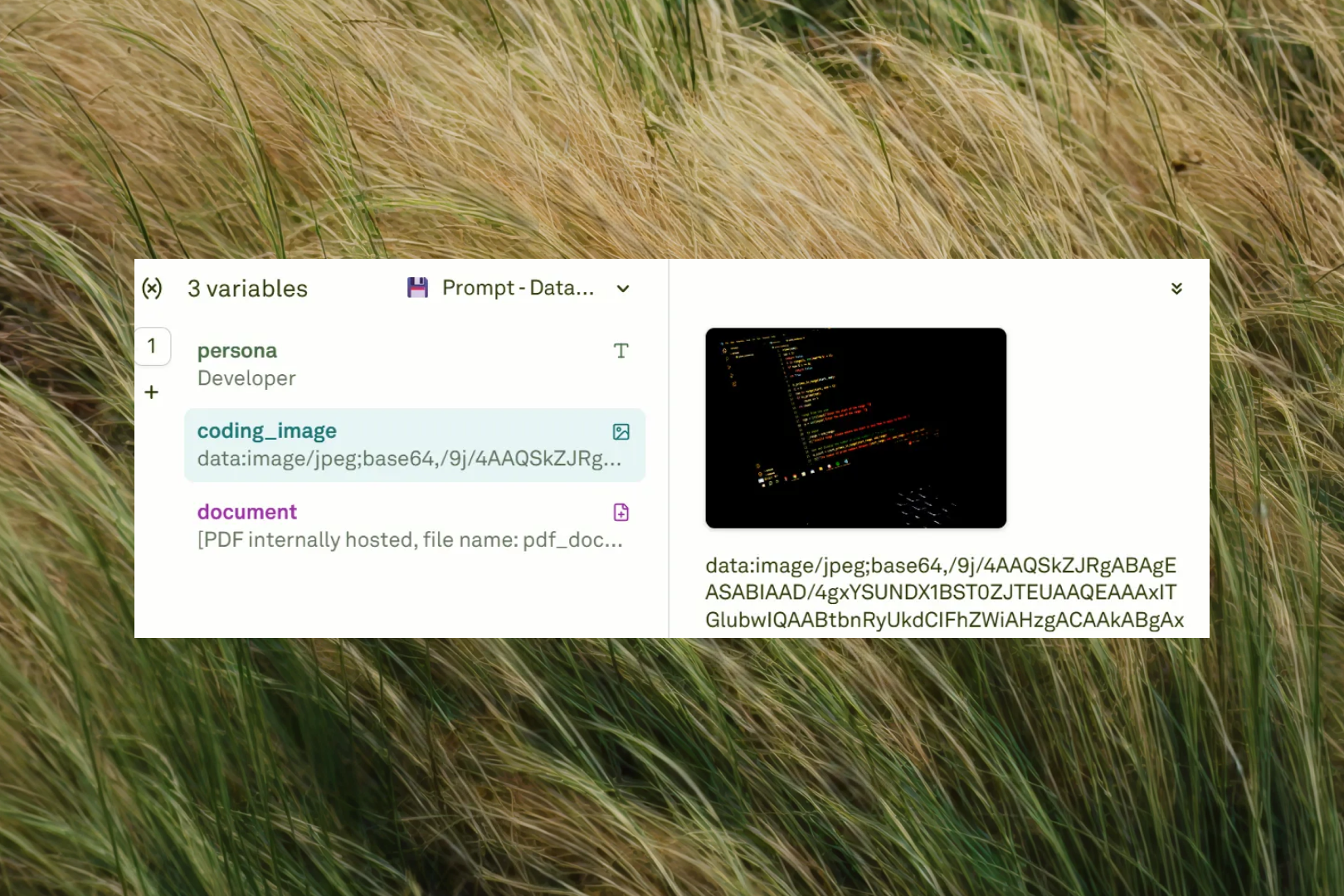

| Images | Attach images via upload, URL, or image variables for vision-capable models. Each message can contain multiple images. |

| PDFs | Attach PDF documents as context for analysis, summarization, or extraction tasks. |

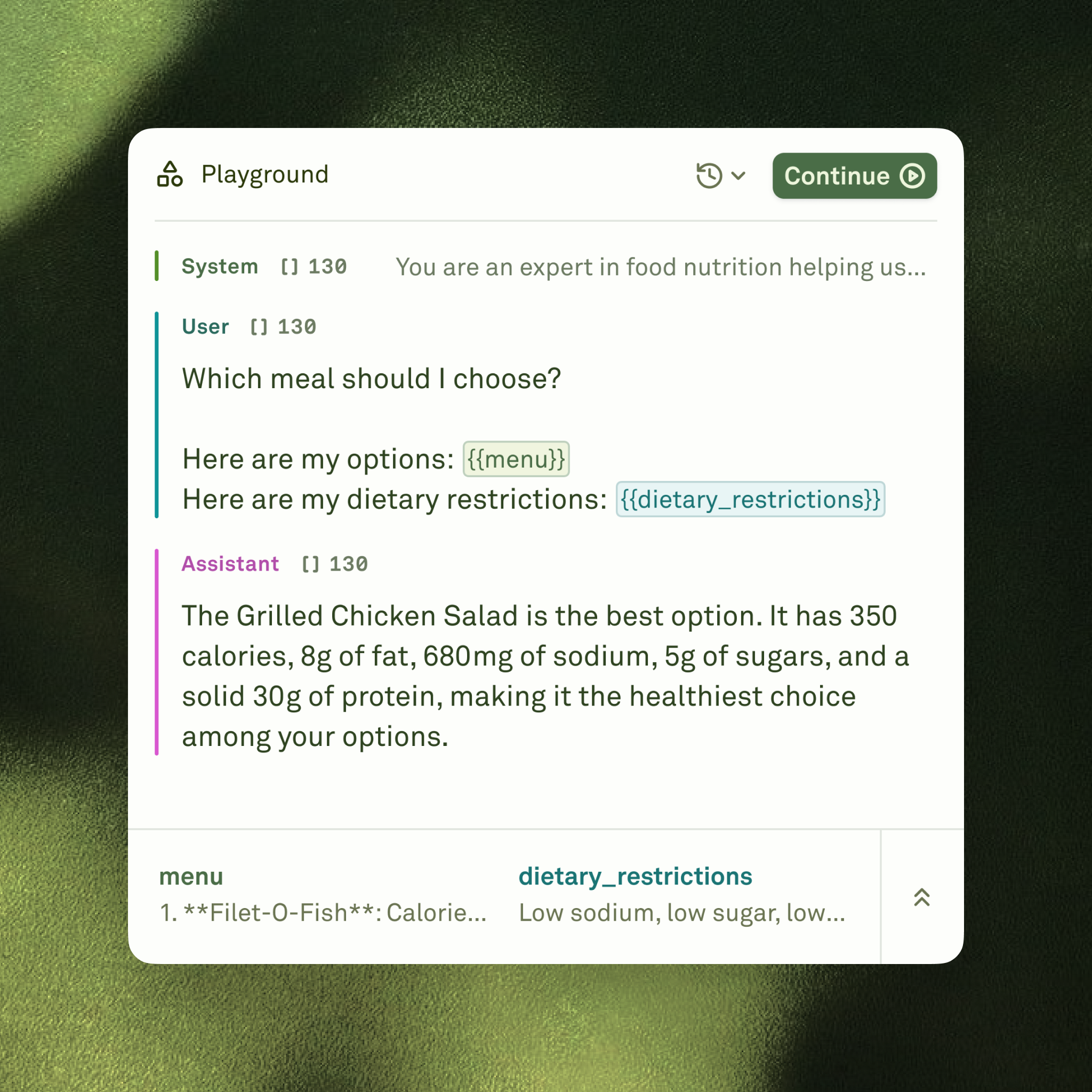
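The message structure described above can be sketched in a few lines of Python. The `Message` and `ContentBlock` classes, the `strip_comments` helper, and the example URL are hypothetical names introduced for this illustration. The way comments are removed here (a non-greedy regular expression) is an assumption about behavior, not Adaline's implementation.

```python
import re
from dataclasses import dataclass, field
from typing import List

ROLES = {"system", "user", "assistant", "tool"}
COMMENT_RE = re.compile(r"/\*.*?\*/", re.DOTALL)  # matches /* ... */


@dataclass
class ContentBlock:
    type: str   # "text", "image", or "pdf"
    value: str  # text body, or a URL/path for images and PDFs


@dataclass
class Message:
    role: str
    content: List[ContentBlock] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.role not in ROLES:
            raise ValueError(f"unknown role: {self.role}")


def strip_comments(text: str) -> str:
    """Drop /* ... */ annotations and collapse the gap they leave."""
    without = COMMENT_RE.sub("", text)
    return re.sub(r"[ \t]{2,}", " ", without).strip()


def prepare(messages: List[Message]) -> List[Message]:
    """Return copies of the messages with comments removed from text blocks."""
    prepared = []
    for message in messages:
        blocks = [
            ContentBlock(b.type, strip_comments(b.value)) if b.type == "text" else b
            for b in message.content
        ]
        prepared.append(Message(message.role, blocks))
    return prepared


messages = [
    Message("system", [ContentBlock("text", "You are a concise analyst. /* owner: growth team */")]),
    Message("user", [
        ContentBlock("text", "Summarize the report. /* reviewed by QA */ Use a {{tone}} tone."),
        ContentBlock("image", "https://example.com/chart.png"),  # placeholder URL
    ]),
]
prepared = prepare(messages)
print(prepared[1].content[0].value)
```

In this sketch the `{{tone}}` placeholder is left untouched, since variable substitution is a separate step from comment removal.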

Variables

Variables make your prompts dynamic and reusable. Define a variable by wrapping its name in double curly braces, as in {{variable_name}}, anywhere in your message text. It then appears automatically in the Variable Editor, where you can set its value. Beyond static text and image variables, Adaline supports two advanced variable types:

- API variables — Fetch live data from external HTTP endpoints at runtime. Configure an API source with a URL, method, headers, and body, and the response is injected directly into your prompt context when the prompt executes.

- Prompt variables — Chain prompts together by using the output of one prompt as the input to another. This enables modular, agent-like workflows where each step can be independently authored, tested, and refined.
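To make the variable types above concrete, here is a minimal sketch of double-curly-brace substitution in which a value is either a static string or a callable resolved at render time. The callables stand in for API variables and prompt variables. All names (`find_variables`, `render`, `fetch_weather`, `summarize_step`) are hypothetical, and the accepted variable-name syntax is an assumption rather than Adaline's actual rule.

```python
import re
from typing import Callable, Dict, List, Union

# Assumed syntax: {{name}} with an identifier-like name and optional spaces.
VAR_RE = re.compile(r"\{\{\s*([A-Za-z_][A-Za-z0-9_]*)\s*\}\}")

Value = Union[str, Callable[[], str]]


def find_variables(text: str) -> List[str]:
    """List variable names in order of first appearance, without duplicates."""
    seen: List[str] = []
    for name in VAR_RE.findall(text):
        if name not in seen:
            seen.append(name)
    return seen


def render(text: str, values: Dict[str, Value]) -> str:
    """Substitute every variable; callables are resolved at render time."""
    def resolve(match: "re.Match[str]") -> str:
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value set for variable {name!r}")
        value = values[name]
        return value() if callable(value) else value
    return VAR_RE.sub(resolve, text)


def fetch_weather() -> str:
    # Stand-in for an API variable; a real one would call an HTTP endpoint.
    return "12 C, light rain"


def summarize_step() -> str:
    # Stand-in for a prompt variable: the output of an upstream prompt.
    return render("Summary for {{city}}", {"city": "Oslo"})


template = "Context: {{summary}}\nWeather: {{weather}}\nAudience: {{audience}}"
print(find_variables(template))
print(render(template, {
    "summary": summarize_step,
    "weather": fetch_weather,
    "audience": "commuters",
}))
```

Treating dynamic sources as callables keeps the template itself unchanged, so each upstream step can be swapped or tested in isolation.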

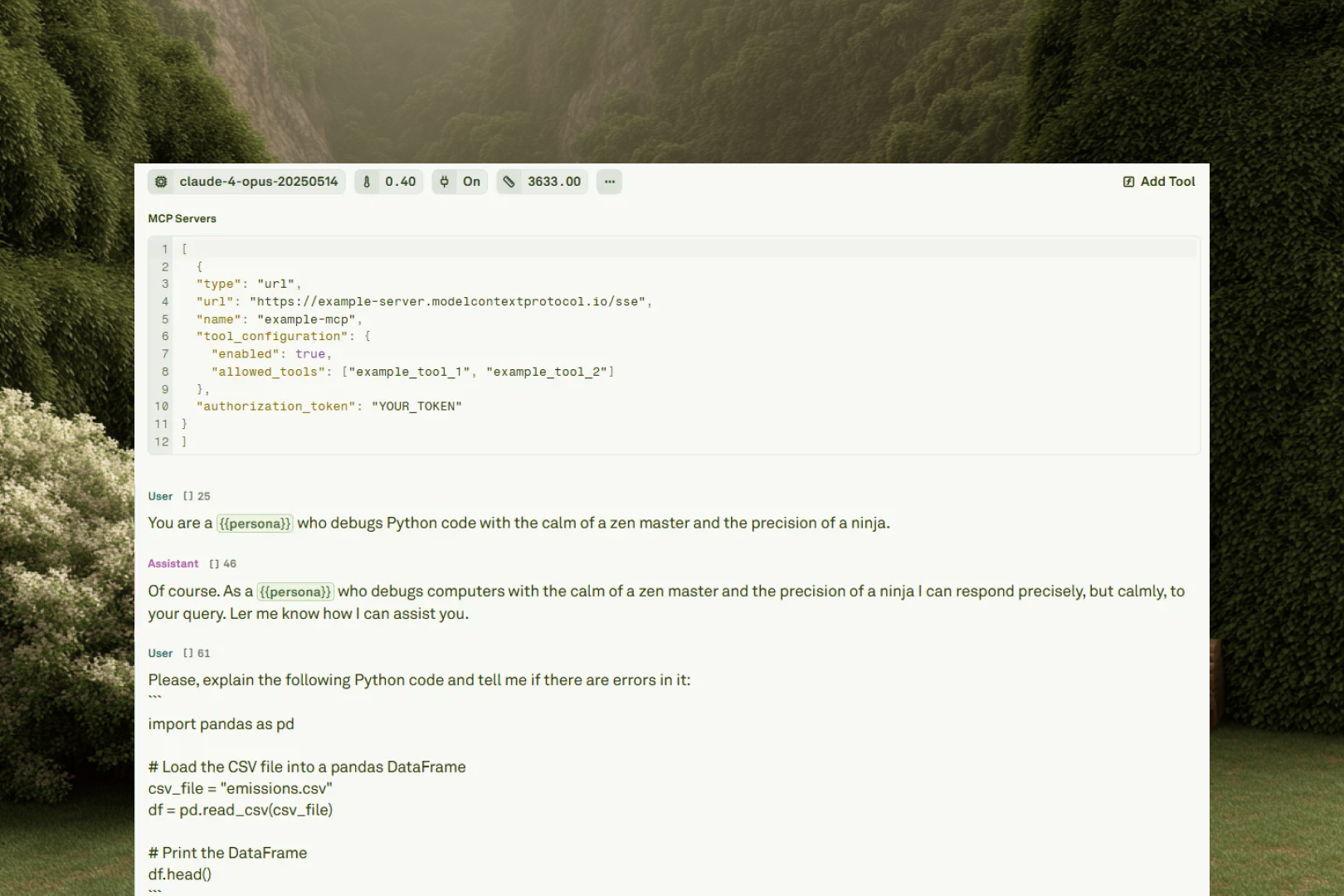

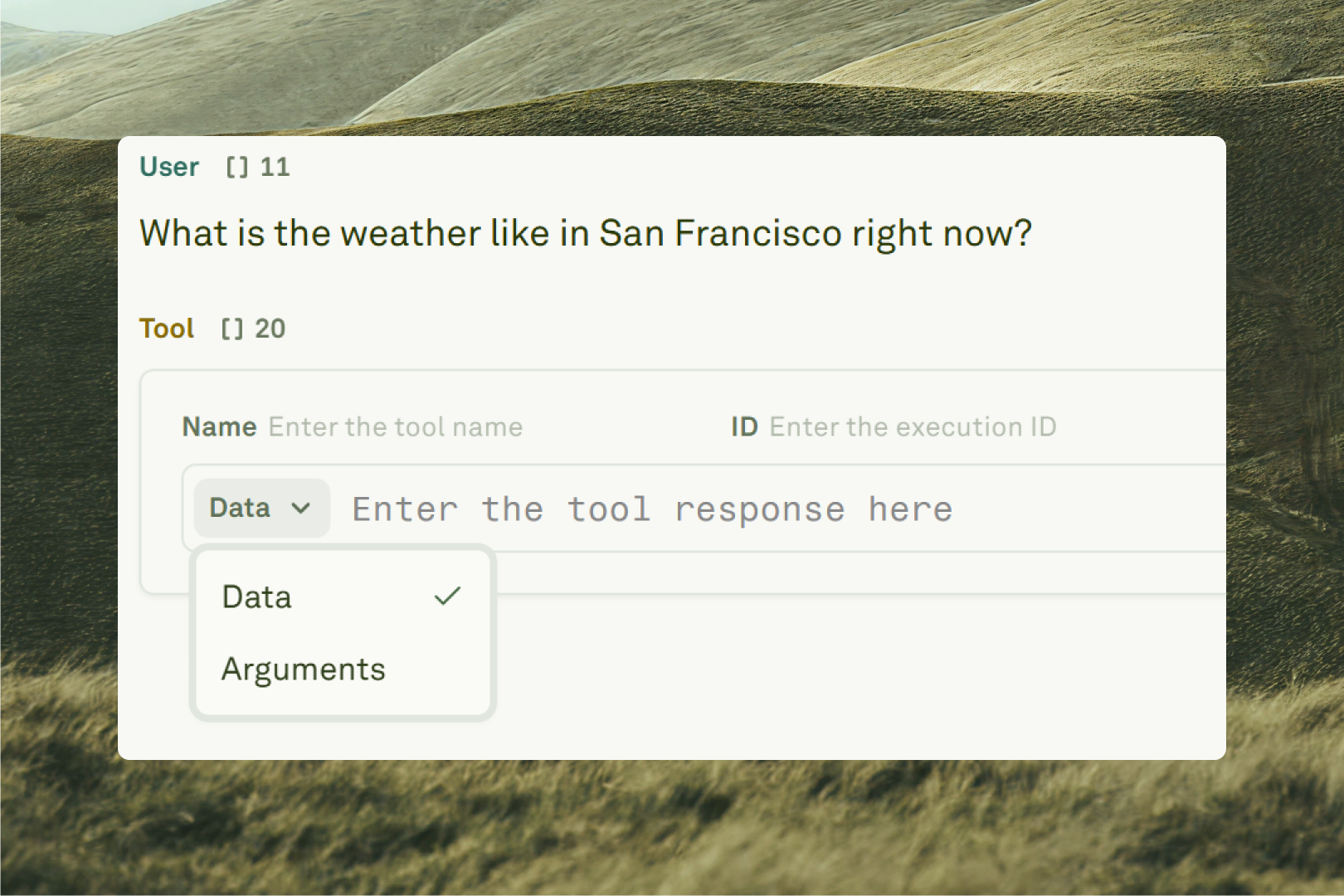

Tools and MCP

Playground

- Run prompts — Execute your prompt, add follow-up messages, and switch between models to compare how different LLMs handle the same input.

- View past runs — Access a versioned history of every playground execution. Restore any previous state to recover a good result or compare outputs across configurations.

- Test tool calls — Handle tool call responses manually or enable auto tool calls for fully automated multi-turn conversations with tool-equipped prompts and MCP servers.

- Link datasets — Connect datasets to your prompt to cycle through structured variable samples at scale. Each row in the dataset represents a different test case — select a row and run to test your prompt with that specific combination.
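The dataset workflow in the last item can be pictured as a loop over rows, where each row supplies one set of variable values. The sketch below assumes a CSV-shaped dataset and uses a placeholder `run_model` function instead of a real model call. The column names, template, and helper names are all invented for illustration.

```python
import csv
import io
import re
from typing import Dict, List

VAR_RE = re.compile(r"\{\{(\w+)\}\}")

# Hypothetical dataset: one column per variable, one row per test case.
DATASET_CSV = """product,tone
running shoes,playful
standing desk,formal
"""


def load_rows(csv_text: str) -> List[Dict[str, str]]:
    """Parse CSV text into a list of {column: value} dictionaries."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def render(template: str, row: Dict[str, str]) -> str:
    """Fill each {{variable}} from the matching column of the row."""
    return VAR_RE.sub(lambda m: row[m.group(1)], template)


def run_model(prompt: str) -> str:
    # Placeholder for a real model call; it only echoes the prompt.
    return f"[model output for: {prompt}]"


template = "Write a {{tone}} tagline for {{product}}."
rows = load_rows(DATASET_CSV)

# One run per row, mirroring "select a row and run" for every test case.
results = [(row, run_model(render(template, row))) for row in rows]
for row, output in results:
    print(row["product"], "->", output)
```

A row that lacks a column used in the template raises a `KeyError` here, which surfaces incomplete test cases early instead of sending a half-filled prompt.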

- Use Parameters in Prompts: Select an LLM and fine-tune its generation settings.

- Use Roles in Prompts: Structure prompts with role-based messages.

- Use Variables in Prompts: Create dynamic, reusable prompt templates.

- Run Prompts in Playground: Test your prompts interactively with real inputs.