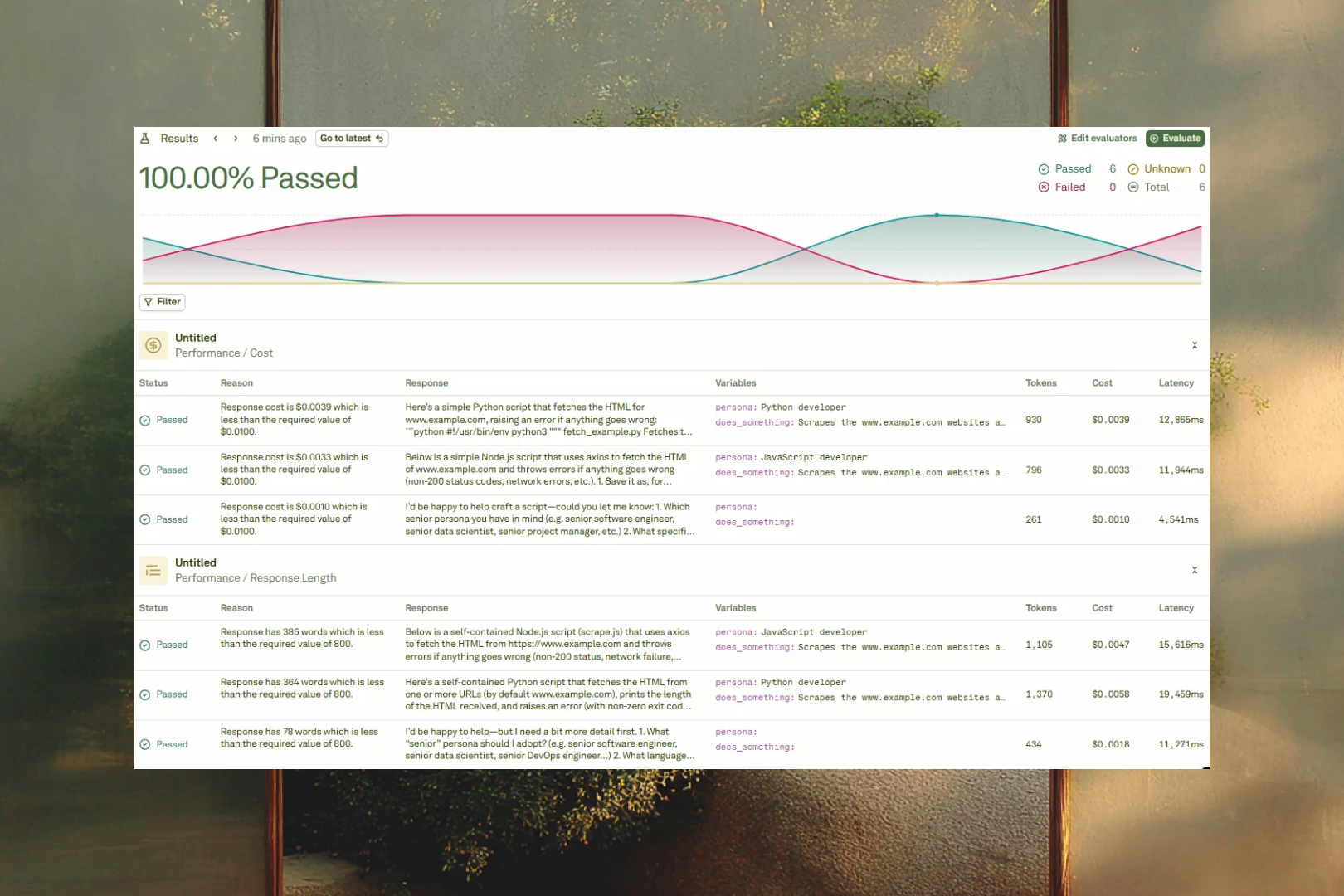

The Evaluate pillar is your quality assurance center for prompts. It lets you test prompts against real-world scenarios at scale, measure their effectiveness with configurable evaluators, and identify areas for improvement before deploying to production. The workflow is: build a dataset of test cases, configure evaluators that define your quality criteria, run evaluations to score every test case, and analyze the results to drive improvements.
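The four workflow steps can be sketched in a few lines of Python. This is only an illustration of the idea, not Adaline's API: `run_evaluation`, `fake_generate`, and the evaluator names are hypothetical, and the model call is replaced by a stand-in so the sketch runs offline.

```python
from typing import Callable

# Hypothetical types for illustration only; not part of any real SDK.
TestCase = dict[str, str]
Evaluator = Callable[[str], bool]  # takes a response, returns pass/fail


def run_evaluation(
    dataset: list[TestCase],
    generate: Callable[[TestCase], str],
    evaluators: dict[str, Evaluator],
) -> dict[str, float]:
    """Score every test case with every evaluator and return pass rates."""
    passes = {name: 0 for name in evaluators}
    for case in dataset:
        response = generate(case)
        for name, evaluator in evaluators.items():
            if evaluator(response):
                passes[name] += 1
    total = len(dataset)
    return {name: (count / total if total else 0.0) for name, count in passes.items()}


# 1. Build a dataset of test cases.
dataset = [
    {"user_question": "What is the refund window?"},
    {"user_question": "Do you ship abroad?"},
]


# Stand-in for a real model call (assumption: a real run would call an LLM here).
def fake_generate(case: TestCase) -> str:
    return f"Answer to: {case['user_question']}"


# 2. Configure evaluators that define the quality criteria.
evaluators = {
    "mentions_question": lambda r: "Answer to:" in r,
    "under_35_chars": lambda r: len(r) < 35,
}

# 3. Run the evaluation; 4. analyze the per-evaluator pass rates.
report = run_evaluation(dataset, fake_generate, evaluators)
print(report)
```

Reporting one pass rate per evaluator keeps the criteria separate, so a regression in one criterion is not masked by the others.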

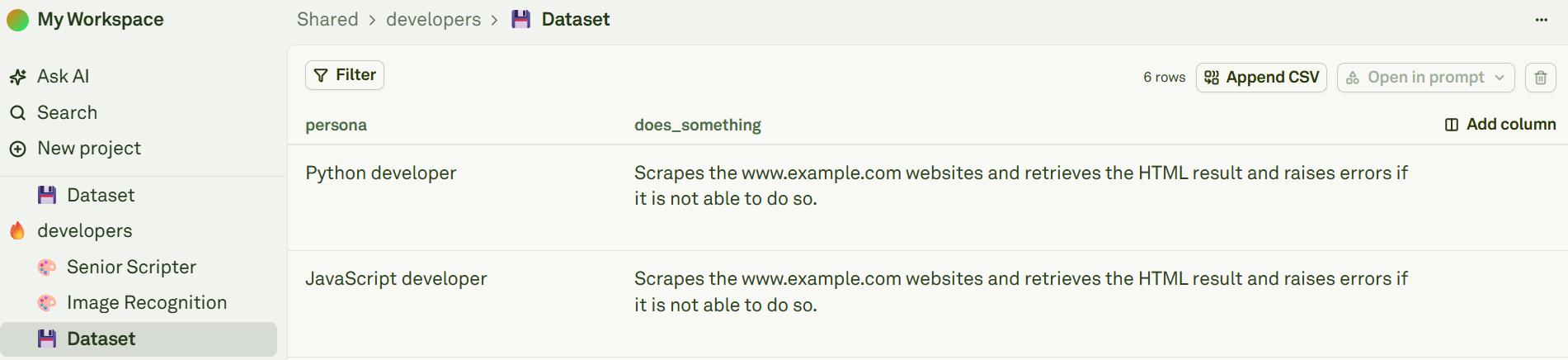

Datasets

Dataset columns map to prompt variables by name. For example, if your prompt uses {{user_question}} and {{context}}, your dataset needs columns named user_question and context. Extra columns are silently ignored, but missing columns will cause the evaluation to fail.
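The column-to-variable rule can be checked before a run with a small script. The following is a minimal sketch, assuming variables use the `{{name}}` syntax shown above; `check_columns` is a hypothetical helper, and tolerating whitespace inside the braces is an assumption rather than documented behavior.

```python
import re

# Matches {{variable_name}} placeholders.
# Assumption: optional whitespace inside the braces is tolerated.
VARIABLE_PATTERN = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def prompt_variables(prompt: str) -> set[str]:
    """Return the set of variable names referenced in a prompt template."""
    return set(VARIABLE_PATTERN.findall(prompt))


def check_columns(prompt: str, columns: list[str]) -> dict[str, list[str]]:
    """Compare prompt variables against dataset column names."""
    needed = prompt_variables(prompt)
    present = set(columns)
    return {
        "missing": sorted(needed - present),  # these would make the evaluation fail
        "ignored": sorted(present - needed),  # extra columns, silently skipped
    }


prompt = "Answer {{user_question}} using only this context: {{context}}"
result = check_columns(prompt, ["user_question", "notes"])
print(result)
```

Here the dataset lacks a context column, so it is reported as missing, while the unused notes column is reported as ignored.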

You can populate datasets by typing values manually, importing CSV files for bulk data, or building them from production logs captured by the Monitor pillar. Datasets also support dynamic columns — columns configured as API variables or prompt variables that fetch live data at runtime instead of storing static values. When an evaluation runs, dynamic columns are automatically resolved with fresh values before scoring begins.
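To make the difference between static and dynamic columns concrete, here is a minimal sketch that loads static rows from CSV text and then resolves a dynamic column just before scoring. It models a dynamic column as a Python callable, which is purely an assumption for illustration; `load_rows`, `resolve_row`, and `fetch_inventory` are hypothetical names, and the real product configures dynamic columns through its own interface.

```python
import csv
import io
from typing import Callable, Union

# A cell is either a stored string or a callable that fetches a live value.
CellValue = Union[str, Callable[[], str]]

CSV_TEXT = """user_question
Is the blue mug in stock?
When does the sale end?
"""


def load_rows(csv_text: str) -> list[dict[str, CellValue]]:
    """Bulk-import static rows from CSV text; the header row names the columns."""
    return [dict(row) for row in csv.DictReader(io.StringIO(csv_text))]


def resolve_row(row: dict[str, CellValue]) -> dict[str, str]:
    """Replace every dynamic cell with a freshly fetched value."""
    return {name: value() if callable(value) else value for name, value in row.items()}


calls = {"count": 0}


def fetch_inventory() -> str:
    # Stand-in for a live API call (hypothetical); counts calls to show freshness.
    calls["count"] += 1
    return f"inventory snapshot #{calls['count']}"


rows = load_rows(CSV_TEXT)
for row in rows:
    row["context"] = fetch_inventory  # dynamic column: stores the fetcher, not a value

# Resolution happens once per row, right before scoring would begin.
resolved = [resolve_row(row) for row in rows]
print(resolved)
```

Because the row stores the fetcher rather than its result, every evaluation run sees a new value instead of one frozen at import time.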

Setup Dataset covers creating datasets, column-to-variable mapping rules, all population methods, and dynamic column configuration in detail.

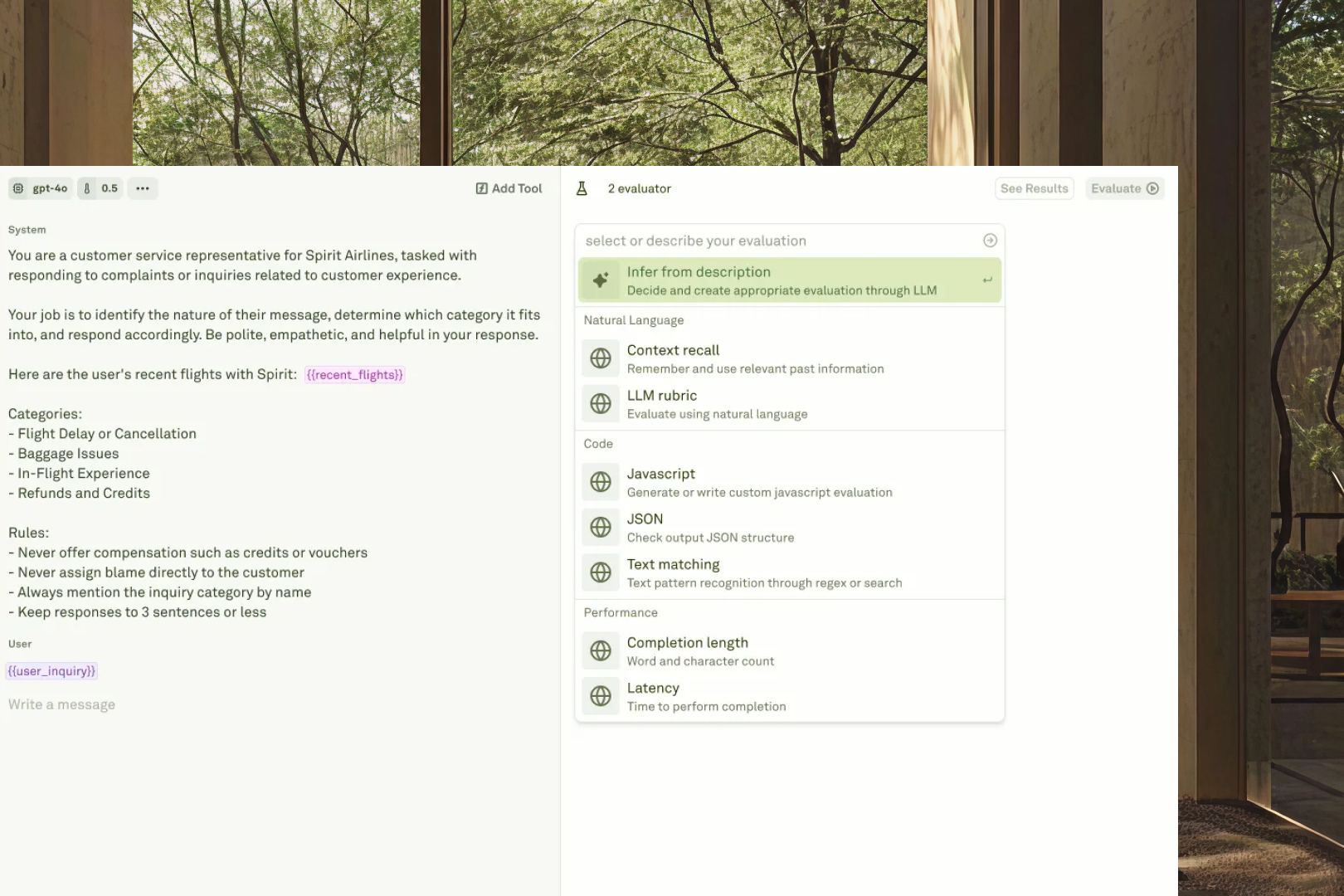

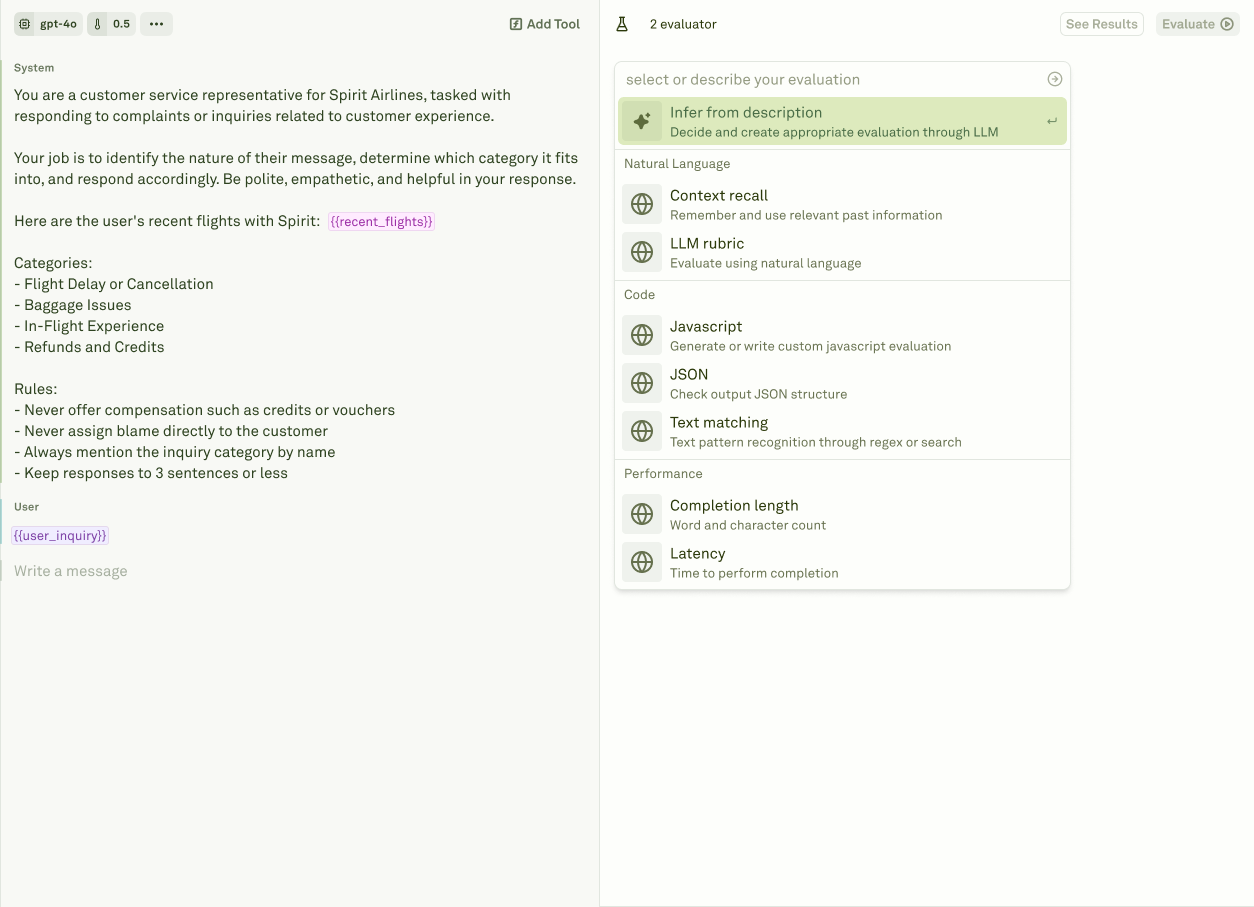

Evaluators

| Evaluator | What it does |
|---|---|
| LLM-as-a-Judge | Uses an LLM to assess response quality against a custom rubric you define. The most versatile evaluator, ideal for qualitative assessment where nuanced judgment matters. |
| JavaScript | Lets you write custom code to validate structured outputs, enforce business rules, check data formats, and implement any evaluation logic expressible in code. |
| Text Matcher | Checks for required keywords, patterns, or regex matches in the response. Supports equals, starts-with, ends-with, contains-any, contains-all, not-contains-any, and regex modes. |
| Cost | Calculates the cost of each response based on actual token usage and provider pricing. Set thresholds to enforce budget caps. |
| Latency | Measures the round-trip response time. Set thresholds to enforce SLA requirements. |
| Response Length | Counts response size in tokens, words, or characters. Set thresholds to enforce brevity or minimum detail requirements. |
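As an illustration of what the Text Matcher modes mean, the sketch below implements the seven listed modes in Python. It is not the product's implementation: `text_match` is a hypothetical function, and case-sensitive comparison and search-anywhere regex semantics are assumptions.

```python
import re


def text_match(response: str, mode: str, values: list[str]) -> bool:
    """Return True if the response satisfies the given matcher mode.

    Assumptions: matching is case-sensitive, single-value modes use values[0],
    and regex mode matches anywhere in the response.
    """
    if mode == "equals":
        return response == values[0]
    if mode == "starts-with":
        return response.startswith(values[0])
    if mode == "ends-with":
        return response.endswith(values[0])
    if mode == "contains-any":
        return any(v in response for v in values)
    if mode == "contains-all":
        return all(v in response for v in values)
    if mode == "not-contains-any":
        return not any(v in response for v in values)
    if mode == "regex":
        return re.search(values[0], response) is not None
    raise ValueError(f"unknown mode: {mode}")


response = "Your order #1234 has shipped."
checks = {
    "has_order_id": text_match(response, "regex", [r"#\d{4}"]),
    "confirms_shipping": text_match(response, "contains-all", ["order", "shipped"]),
    "no_apology": text_match(response, "not-contains-any", ["sorry", "refund"]),
}
print(checks)
```

Combining several matchers on the same response, as in `checks`, is one way to express a required pattern together with forbidden wording.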

Evaluations

- Single prompt — Run a batch evaluation on one prompt across all test cases in the dataset. This is the standard mode for most evaluation workflows.

- Chained prompts — Evaluate multi-step workflows where prompts reference each other through prompt variables. The system executes the full chain for each test case, with cumulative cost and latency tracking across all steps.

- Multi-turn chat — Assess conversational AI systems where response quality depends on context accumulated across multiple user-assistant exchanges.
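The chained mode described above can be pictured as a loop that feeds each step's output to later steps while summing cost and latency. The following is a minimal sketch under stated assumptions: `StepResult`, `run_chain`, and the two stand-in steps are hypothetical, and the cost and latency figures are made up so the example runs without a model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StepResult:
    output: str
    cost_usd: float
    latency_ms: float


# A step receives the variables known so far and returns its result.
Step = Callable[[dict[str, str]], StepResult]


def run_chain(
    steps: list[tuple[str, Step]], test_case: dict[str, str]
) -> tuple[str, float, float]:
    """Execute every step in order for one test case.

    Each step's output is stored under the step's name so later prompts can
    reference it as a variable. Returns (final output, total cost, total latency).
    """
    variables = dict(test_case)
    total_cost = 0.0
    total_latency = 0.0
    output = ""
    for name, step in steps:
        result = step(variables)
        variables[name] = result.output
        output = result.output
        total_cost += result.cost_usd
        total_latency += result.latency_ms
    return output, total_cost, total_latency


# Stand-in steps with invented cost and latency numbers (illustrative only).
def summarize(v: dict[str, str]) -> StepResult:
    return StepResult(f"summary({v['document']})", cost_usd=0.002, latency_ms=300.0)


def answer(v: dict[str, str]) -> StepResult:
    return StepResult(f"answer({v['summary']})", cost_usd=0.003, latency_ms=450.0)


final, cost, latency = run_chain(
    [("summary", summarize), ("answer", answer)],
    {"document": "policy text"},
)
print(final, round(cost, 6), latency)
```

Tracking totals per test case rather than per step is what lets a cost or latency threshold apply to the workflow as a whole.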

Setup Dataset

Create and configure datasets for evaluation.

Evaluate Prompts

Run your first evaluation across test cases.

LLM-as-a-Judge

Set up the most versatile evaluator.

Analyze Reports

Review and compare evaluation results.