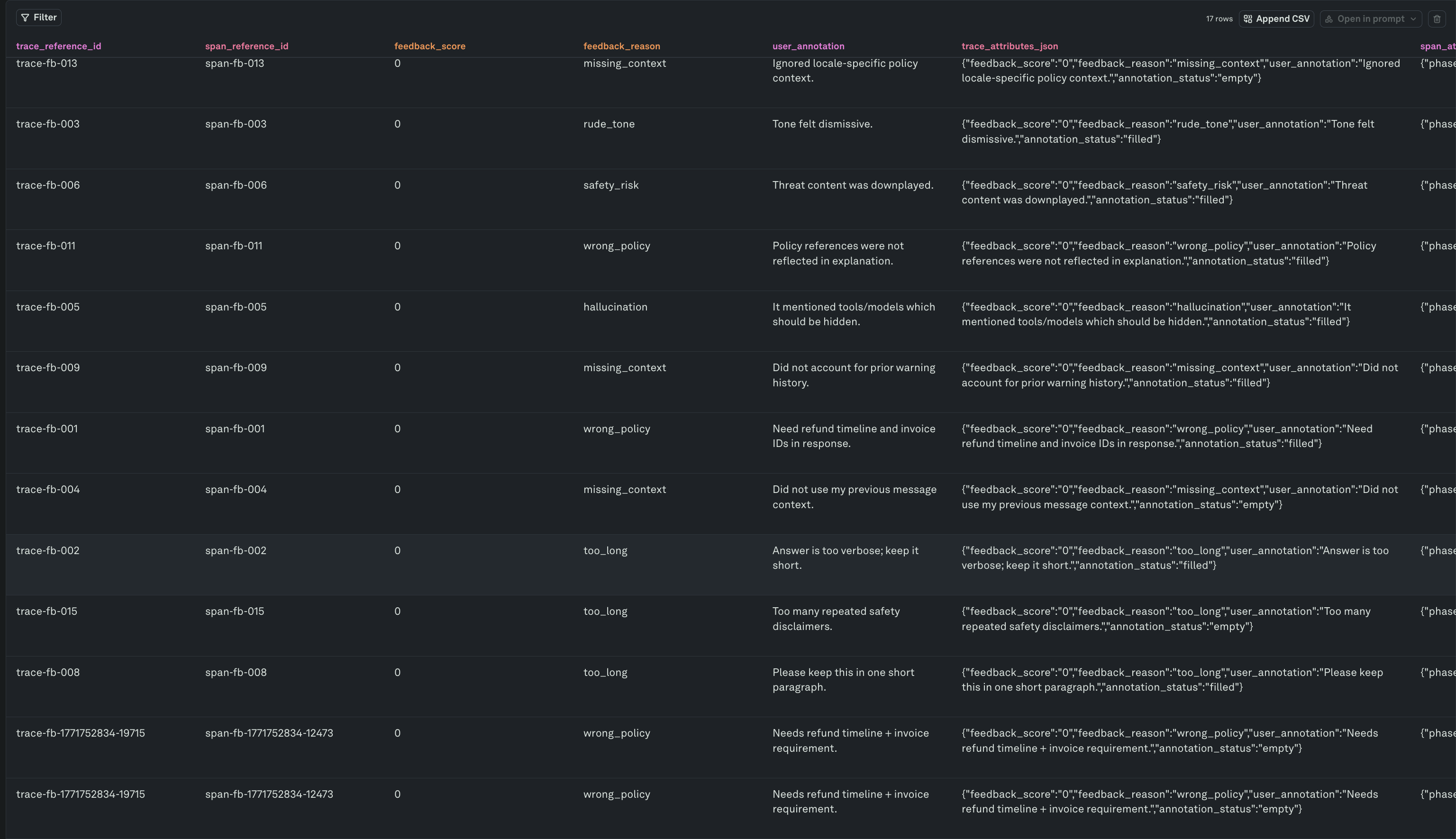

Adding production logs to a dataset captures the raw data: inputs, outputs, and metadata. But raw data alone is not enough for high-quality evaluations. You need human judgment: why a response failed, what the correct answer should have been, and which cases are high priority. Annotation columns and dataset filters let you build a structured review queue so your team can systematically annotate every row, turning a log dump into a curated evaluation suite.

Add annotation columns

When you set up a dataset, you can add columns beyond the prompt’s input and output variables. These extra columns hold human annotations and review metadata that live alongside the production data. Recommended annotation columns:

| Column | Type | Purpose |
|---|---|---|
| `annotation` | Free text | Reviewer notes explaining why the response is wrong and what needs to change. |
| `annotation_status` | Label | Tracks whether the row has been reviewed: empty for pending, filled for completed. |
| `feedback_category` | Label | Structured classification like correct, incorrect, hallucination, off-topic, too-long. |
| `expected_output` | Free text | The ideal response a reviewer writes by hand, used as a reference for LLM-as-a-Judge evaluations. |
| `priority` | Label | Urgency flag (high, medium, or low) so reviewers can triage effectively. |

The `annotation_status` column is the key to building a review queue. Every row added from Monitor starts with `annotation_status = empty`, signaling that it needs human review. Once a reviewer has filled in the annotations for a row, they set its status to `filled`.
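
To make the column layout concrete, here is a minimal local sketch of a dataset row as a Python dataclass. It is only an in-memory model for illustration, not Adaline's API: the class name `AnnotatedRow`, the `input`/`output` field names, the sample text, and the use of `None` to represent an empty cell are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AnnotatedRow:
    """Hypothetical local model of one dataset row (not an Adaline type)."""

    # Production data captured from the log.
    input: str
    output: str
    # Annotation columns; None stands in for an empty cell (an assumption).
    annotation: Optional[str] = None
    annotation_status: Optional[str] = None  # None = pending, "filled" = reviewed
    feedback_category: Optional[str] = None
    expected_output: Optional[str] = None
    priority: Optional[str] = None


def needs_review(row: AnnotatedRow) -> bool:
    """A row is pending while its annotation_status is still empty."""
    return row.annotation_status is None


# Illustrative row: every annotation column starts out empty.
row = AnnotatedRow(input="example user question", output="example model answer")
```

A freshly created row reports `needs_review(row)` as true until a reviewer sets `annotation_status` to `"filled"`.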

Build a review queue

The review queue is simply your dataset filtered to show only rows whose annotation columns are still empty. This gives reviewers a focused list of exactly the rows that need attention, with no searching and no guesswork.

Filter for unannotated rows

Open your dataset and apply a filter on the `annotation_status` column:

- `annotation_status = empty`: shows all rows that have been added from Monitor but not yet reviewed by a human.

Once a reviewer sets `annotation_status = filled`, the row disappears from the filtered view automatically.
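
The filter described above amounts to a simple predicate over rows. The sketch below reproduces it on an exported list of rows, assuming each row is a plain dict and that an empty cell shows up as `None` or an empty string. The function name `review_queue` and the sample rows are hypothetical, and in the product itself the filter is applied in the dataset view rather than in code.

```python
from typing import Optional

Row = dict[str, Optional[str]]


def review_queue(rows: list[Row]) -> list[Row]:
    """Return only the rows whose annotation_status is still empty."""
    # Treat a missing key, None, and "" all as "empty" (an assumption).
    return [r for r in rows if not r.get("annotation_status")]


# Illustrative rows, not real data.
rows = [
    {"input": "q1", "output": "a1", "annotation_status": None},
    {"input": "q2", "output": "a2", "annotation_status": "filled"},
    {"input": "q3", "output": "a3", "annotation_status": ""},
]

queue = review_queue(rows)
print([r["input"] for r in queue])  # prints ['q1', 'q3']
```

Because the queue is recomputed from the current status values, a row drops out as soon as its status becomes `filled`, which mirrors the behavior of the filtered view.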

Work through the queue

For each row in the queue:

- Read the input and output: understand what the user asked and what the model produced.
- Classify the issue: set `feedback_category` to a structured label (`hallucination`, `incorrect`, `off-topic`, etc.) so you can analyze failure patterns later.
- Write the annotation: explain in `annotation` what went wrong and what the correct behavior should be.
- Write the expected output (optional): if the row will be used for LLM-as-a-Judge evaluations, write the ideal response in `expected_output`.
- Set priority (optional): flag rows that need urgent prompt fixes.
- Mark as filled: set `annotation_status = filled` to remove the row from the queue.

You can also filter by `feedback_category` or `priority` to focus on specific failure types or urgent cases. Combining filters lets different reviewers own different slices of the queue.

End-to-end workflow

Annotation works best as a recurring loop that connects monitoring, human review, and evaluation:

Filter logs in Monitor

Use filters to find the logs that matter: failures, low eval scores, negative user feedback, or edge cases.

Add to dataset

Add selected spans to a dataset. The span’s input variables and output are mapped to dataset columns automatically. New rows arrive with `annotation_status = empty`.

Review the queue

Open the dataset and filter for `annotation_status = empty`. This is your team’s review backlog. Work through it by filling annotation columns and marking rows as filled.

Run evaluations

Evaluate your prompt against the annotated dataset. Rows with `expected_output` filled in can be scored by LLM-as-a-Judge evaluators that compare the model’s response against your reference answer.

Fix and redeploy

Use evaluation results to iterate on your prompt. Once fixes pass evaluation, deploy the improved prompt. The annotated rows stay in the dataset as permanent regression tests.
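
For the evaluation step of this loop, it can help to check which annotated rows actually carry a reference answer and how failures are distributed across categories. The sketch below does both over exported rows held as plain dicts. The helper names `judge_ready` and `failure_breakdown` and the sample rows are hypothetical, and the code does not run any evaluator itself.

```python
from collections import Counter


def judge_ready(rows: list[dict]) -> list[dict]:
    """Rows that are reviewed and have a hand-written expected_output."""
    return [
        r for r in rows
        if r.get("annotation_status") == "filled" and r.get("expected_output")
    ]


def failure_breakdown(rows: list[dict]) -> Counter:
    """Count rows per feedback_category, skipping rows without a label."""
    return Counter(r["feedback_category"] for r in rows if r.get("feedback_category"))


# Illustrative rows, not real data.
rows = [
    {"annotation_status": "filled", "feedback_category": "hallucination",
     "expected_output": "reference answer A"},
    {"annotation_status": "filled", "feedback_category": "too-long",
     "expected_output": None},
    {"annotation_status": None, "feedback_category": None,
     "expected_output": None},
]

print(len(judge_ready(rows)))                      # prints 1
print(failure_breakdown(rows)["hallucination"])    # prints 1
```

Only the first sample row qualifies for reference-based scoring: the second has no `expected_output`, and the third has not been reviewed yet.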

Next steps

- Build Datasets from Logs: Add production spans to datasets for evaluation.
- Setup Dataset: Create and configure datasets with annotation columns.
- Evaluate Prompts: Run evaluations against your annotated datasets.
- Use Logs to Improve Prompts: Debug and fix issues found in production logs.