Tools let your LLM interact with external services, databases, and APIs during a conversation. When a model determines that it needs external data or actions, it generates a structured tool call request that can be executed to fetch results and continue the conversation. Adaline supports the full tool calling workflow, from defining tool schemas to configuring automatic execution with custom backends.
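To make the workflow concrete, here is a minimal sketch of what "executing a tool call" can look like on the application side. The tool call shape (`name` plus JSON-encoded `arguments`), the `get_weather` function, and the `TOOLS` registry are all illustrative assumptions, not Adaline's actual payload format or API.

```python
import json


def get_weather(city: str) -> dict:
    """Hypothetical stand-in for a real weather API call."""
    return {"city": city, "temperature_c": 21, "conditions": "clear"}


# Registry mapping tool names to local callables (illustrative only).
TOOLS = {"get_weather": get_weather}


def execute_tool_call(tool_call: dict) -> str:
    """Run one tool call and return its result as a JSON string."""
    name = tool_call["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    # Assumption: arguments arrive as a JSON-encoded string.
    arguments = json.loads(tool_call["arguments"])
    return json.dumps(TOOLS[name](**arguments))


# A tool call shaped the way a model might emit it (assumed format).
example_call = {"name": "get_weather", "arguments": '{"city": "Paris"}'}
result = execute_tool_call(example_call)
print(result)
```

The returned string is what would be passed back to the model as the tool response so that it can continue the conversation.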

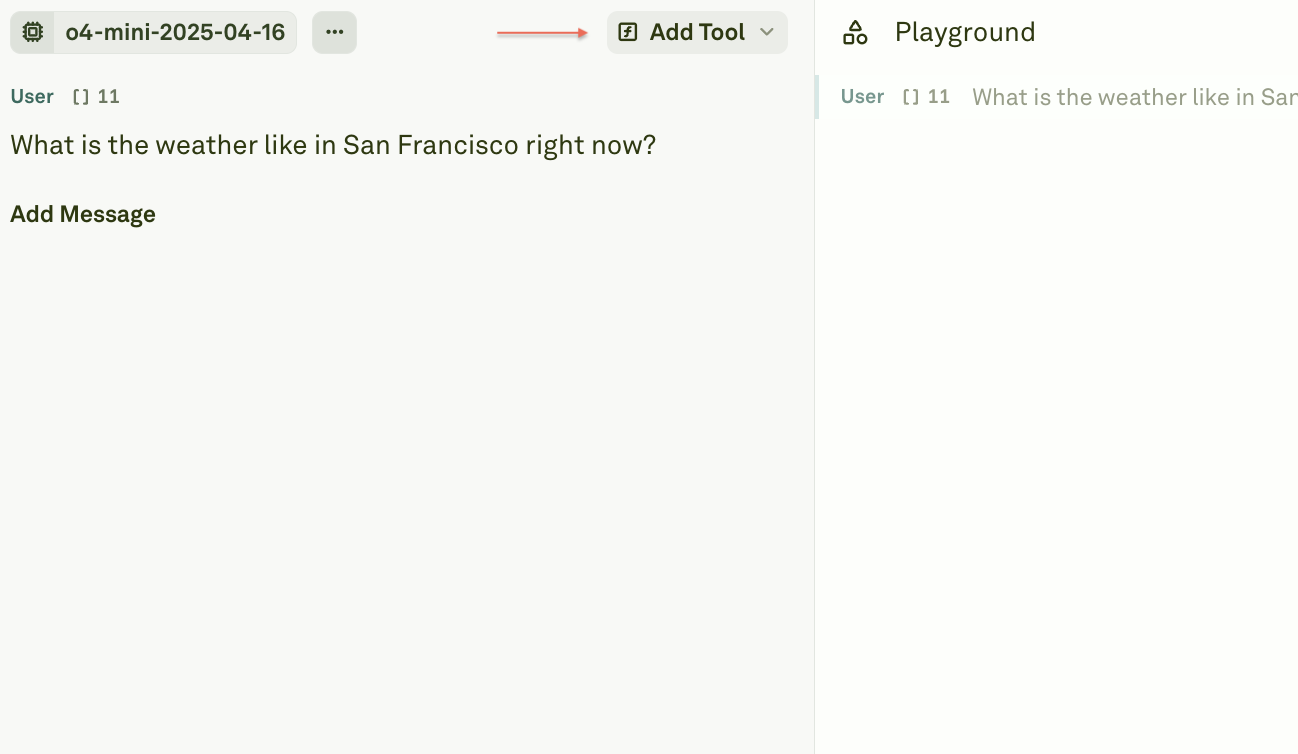

Add tools to a prompt

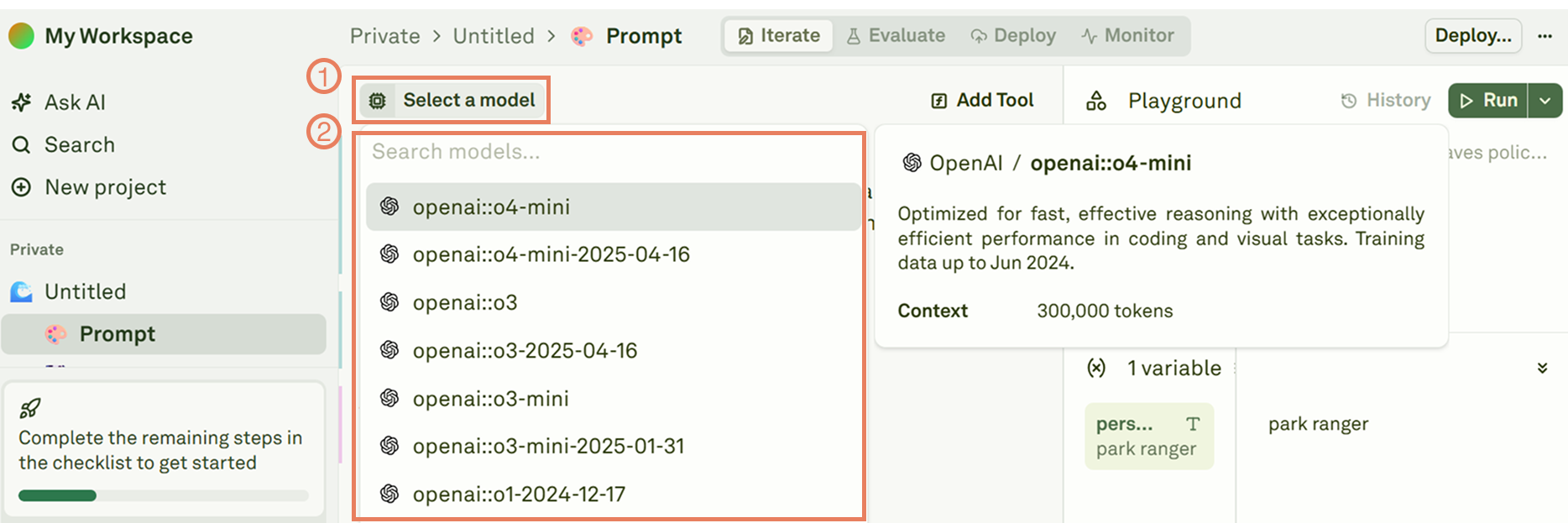

Select a compatible model

Choose an LLM that supports tool calling (function calling). Most modern models from OpenAI, Anthropic, and Google support this feature.

Write your prompt

Compose a prompt that may require external data or actions. For example, a prompt asking about current weather conditions would benefit from a weather API tool.

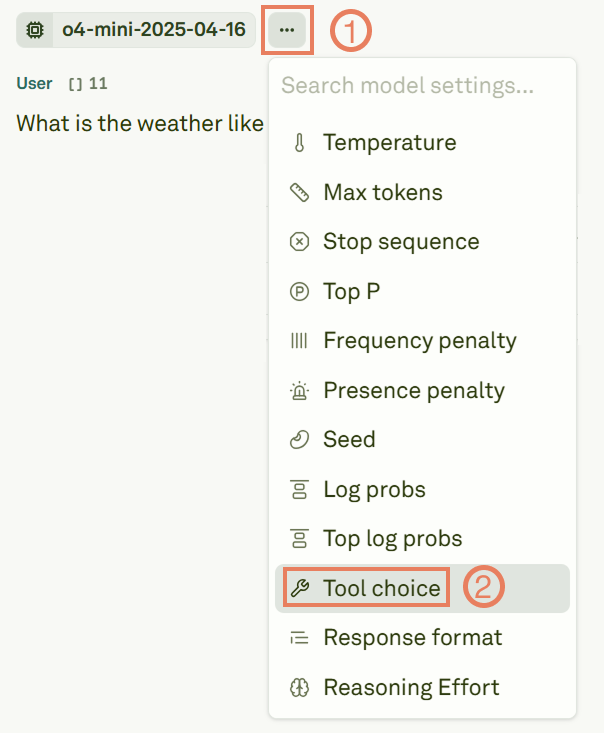

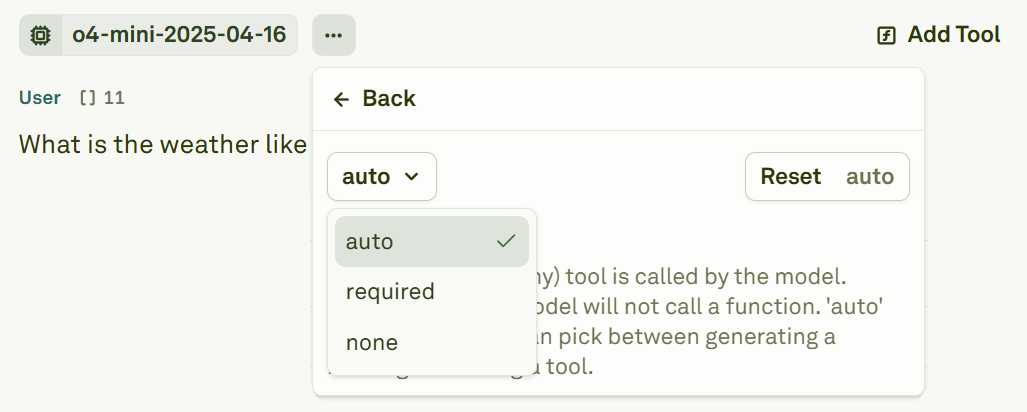

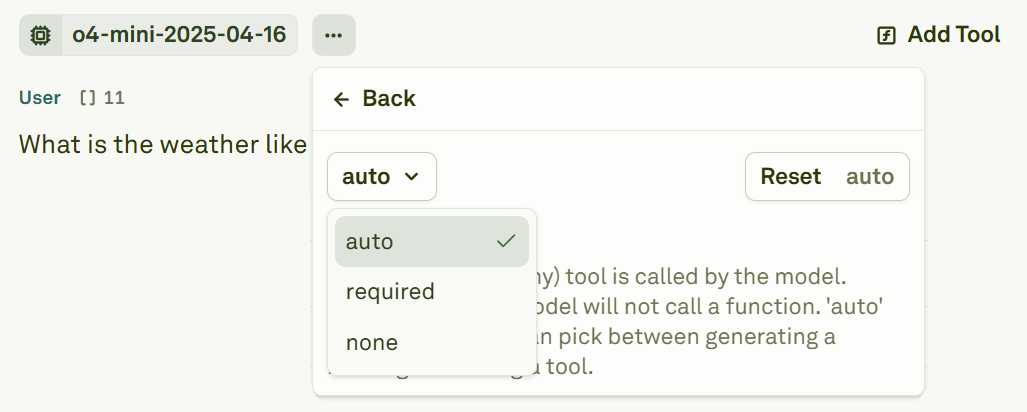

Enable tool choice

Enable the tool choice feature in the model settings to allow the LLM to generate tool calls.

Configure tool choice mode

Set the tool choice mode to control how the model uses your tools.

| Mode | Behavior |
|---|---|
| none | The model will not invoke any tools. |
| auto | The model decides which tools to use and when, based on the conversation context. |
| required | The model must invoke at least one tool in its response. |
| any | The model can call any of the available tools. |
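One way to read the table above is as a constraint on how many tool calls a response may contain. The sketch below encodes that reading in a hypothetical helper, `satisfies_tool_choice`. It is an interpretation of the table, not Adaline's implementation, and the exact semantics of `any` can differ between model providers.

```python
def satisfies_tool_choice(mode: str, tool_calls: list) -> bool:
    """Check a response's tool calls against a tool choice mode.

    Interpretation of the mode table only; provider behavior may differ.
    """
    if mode == "none":
        # No tool calls are allowed at all.
        return len(tool_calls) == 0
    if mode == "required":
        # At least one tool call must be present.
        return len(tool_calls) >= 1
    if mode in ("auto", "any"):
        # The model is free to call tools or to answer directly.
        return True
    raise ValueError(f"unknown tool choice mode: {mode}")


calls = [{"name": "get_weather", "arguments": '{"city": "Paris"}'}]
print(satisfies_tool_choice("required", calls))
print(satisfies_tool_choice("none", calls))
```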

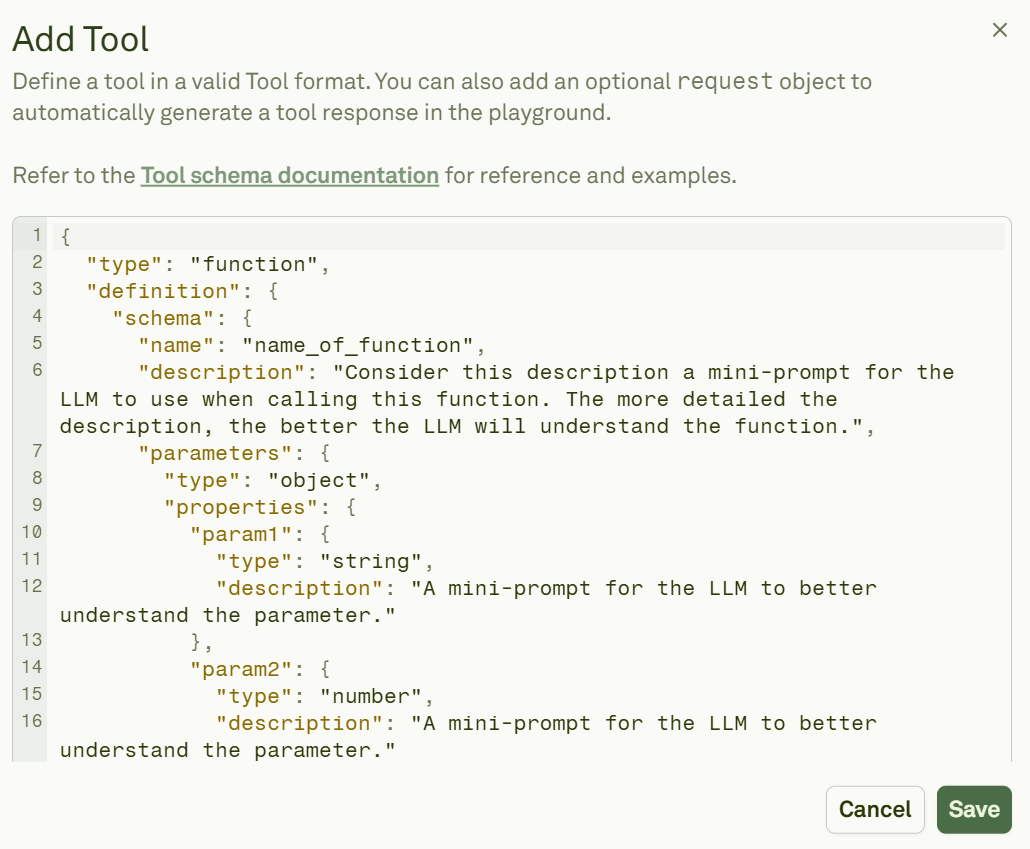

Tool schema definition

Each tool is defined using a JSON schema that tells the model what the tool does and what parameters it accepts. Click Add Tool to open the schema editor:

Schema reference

| Field | Type | Required | Description |
|---|---|---|---|
| type | String | Yes | Always set to "function". |
| name | String | Yes | A unique identifier for the tool. |
| description | String | Yes | Describes what the tool does. Helps the model decide when to use it. |
| parameters.type | String | No | The parameter structure type. Typically "object". |
| parameters.properties | Object | No | Defines individual parameters with their types and descriptions. |
| required | Array | No | Lists the parameter names that are mandatory. |

Refer to the OpenAI function calling documentation for more schema examples and best practices.
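As an illustration of the fields in the schema reference, the sketch below builds a hypothetical `get_weather` tool definition as a Python dictionary and runs a few basic checks on it. The nesting is an assumption: `required` is placed inside `parameters`, following the OpenAI function calling convention, and the exact layout expected by Adaline's schema editor may differ. The `check_tool_schema` helper is also illustrative and not part of any library.

```python
import json

# Hypothetical tool definition; field names follow the schema reference.
weather_tool = {
    "type": "function",
    "name": "get_weather",
    "description": "Get the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {
            "city": {"type": "string", "description": "City name, e.g. Paris"},
            "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
        },
        # Assumed placement, following the OpenAI convention.
        "required": ["city"],
    },
}


def check_tool_schema(tool: dict) -> list:
    """Return a list of problems found in a tool definition."""
    problems = []
    for field in ("type", "name", "description"):
        if not tool.get(field):
            problems.append(f"missing required field: {field}")
    if tool.get("type") not in (None, "function"):
        problems.append('type must be "function"')
    params = tool.get("parameters", {})
    properties = params.get("properties", {})
    # Every mandatory parameter should be defined under properties.
    for name in params.get("required", []):
        if name not in properties:
            problems.append(f"required parameter not defined: {name}")
    return problems


problems = check_tool_schema(weather_tool)
print(json.dumps(weather_tool, indent=2))
print(problems)
```

A clear `description` matters most in practice, since it is the main signal the model uses to decide when to call the tool.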

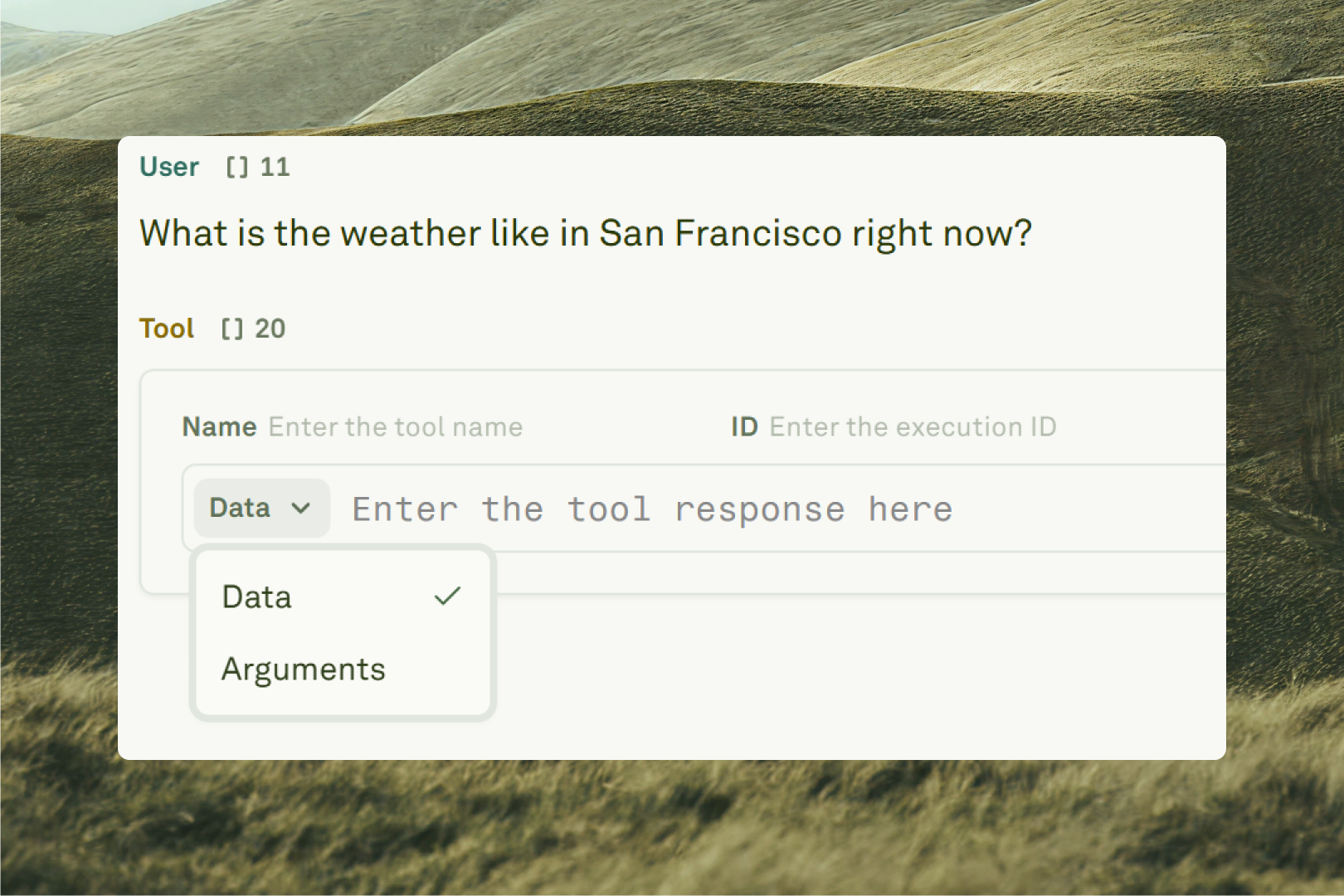

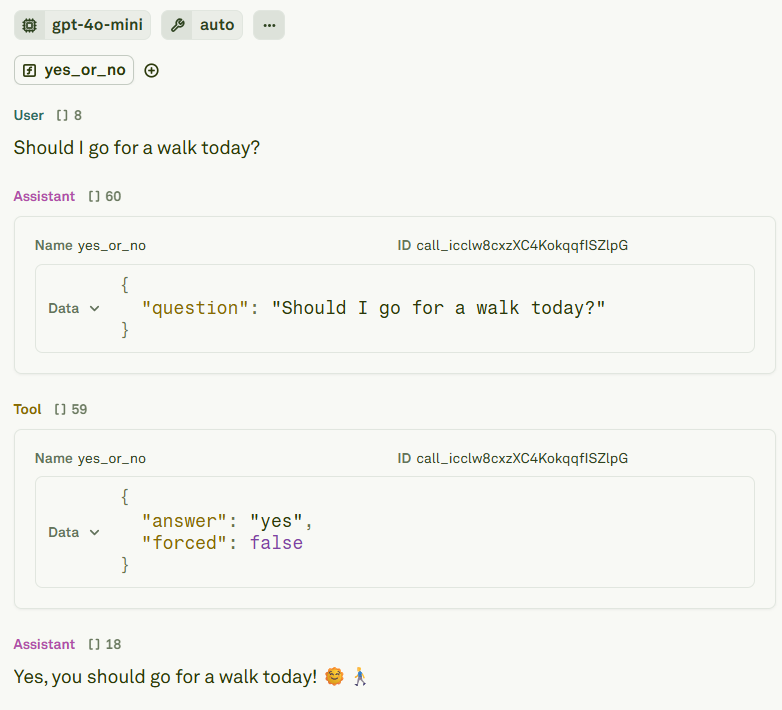

Add tool calls and responses to messages

Beyond defining tools, you can add tool call and tool response messages directly in the Editor. This is useful for building multi-shot prompts that demonstrate how the model should interact with tools.
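A multi-shot demonstration of this kind might be structured as in the sketch below. The message shapes (`role`, `tool_calls`, `tool_call_id`) are assumed, OpenAI-style field names used purely for illustration. The Editor has its own message format, and the `unmatched_tool_responses` helper is hypothetical.

```python
# Assumed, OpenAI-style message shapes; not Adaline's exact format.
few_shot_messages = [
    {"role": "user", "content": "What's the weather in Paris?"},
    {
        "role": "assistant",
        "tool_calls": [
            {"id": "call_1", "name": "get_weather", "arguments": {"city": "Paris"}}
        ],
    },
    {
        "role": "tool",
        "tool_call_id": "call_1",
        "content": '{"temperature_c": 21, "conditions": "clear"}',
    },
    {"role": "assistant", "content": "It is 21 degrees Celsius and clear in Paris."},
]


def unmatched_tool_responses(messages: list) -> list:
    """Return ids of tool responses that have no earlier matching tool call."""
    seen_call_ids = set()
    unmatched = []
    for message in messages:
        for call in message.get("tool_calls", []):
            seen_call_ids.add(call["id"])
        if message["role"] == "tool" and message["tool_call_id"] not in seen_call_ids:
            unmatched.append(message["tool_call_id"])
    return unmatched


print(unmatched_tool_responses(few_shot_messages))
```

Keeping each tool response paired with the call that produced it helps the demonstration show the model a complete, consistent interaction.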

Configure auto tool calls

For tools that connect to a live backend, you can configure an HTTP request endpoint so the Playground automatically executes the tool and continues the conversation. Add a request object to your tool definition:

Request configuration reference

| Field | Type | Required | Description |
|---|---|---|---|
| type | String | Yes | Always set to "http". |
| method | String | Yes | The HTTP method (GET or POST). |
| url | String | Yes | The endpoint URL to call. |
| headers | Object | No | HTTP headers to include in the request. |
| retry | Object | No | Retry configuration for failed requests. |
| retry.maxAttempts | Number | No | Maximum number of retry attempts. |
| retry.initialDelay | Number | No | Initial delay in milliseconds before the first retry. |
| retry.exponentialFactor | Number | No | Multiplier for exponential backoff between retries. |
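The sketch below shows a request object built from the fields above, along with the retry schedule it would imply. The URL and header values are placeholders. The backoff formula (delay before retry n equals `initialDelay * exponentialFactor ** n`, starting at n = 0) is an assumption about how these fields combine, not a documented guarantee.

```python
# Hypothetical request configuration; the URL is a placeholder.
tool_request = {
    "type": "http",
    "method": "POST",
    "url": "https://api.example.com/weather",
    "headers": {"Content-Type": "application/json"},
    "retry": {"maxAttempts": 3, "initialDelay": 500, "exponentialFactor": 2},
}


def retry_delays_ms(retry: dict) -> list:
    """Delays in milliseconds before each retry, under the assumed formula."""
    return [
        retry["initialDelay"] * retry["exponentialFactor"] ** attempt
        for attempt in range(retry["maxAttempts"])
    ]


print(retry_delays_ms(tool_request["retry"]))  # [500, 1000, 2000]
```

Under this reading, a factor of 2 doubles the wait after each failed attempt, which keeps a struggling backend from being hit with rapid repeated requests.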

Next steps

Tool Calls in Playground

Test tool interactions in the Playground sandbox.

Use MCP Servers in Prompts

Connect to MCP servers for standardized tool access.