Spans represent individual operations within a trace, such as LLM calls, tool executions, embedding generation, and retrieval operations. Analyzing spans gives you granular visibility into each step of your AI agent’s workflow.

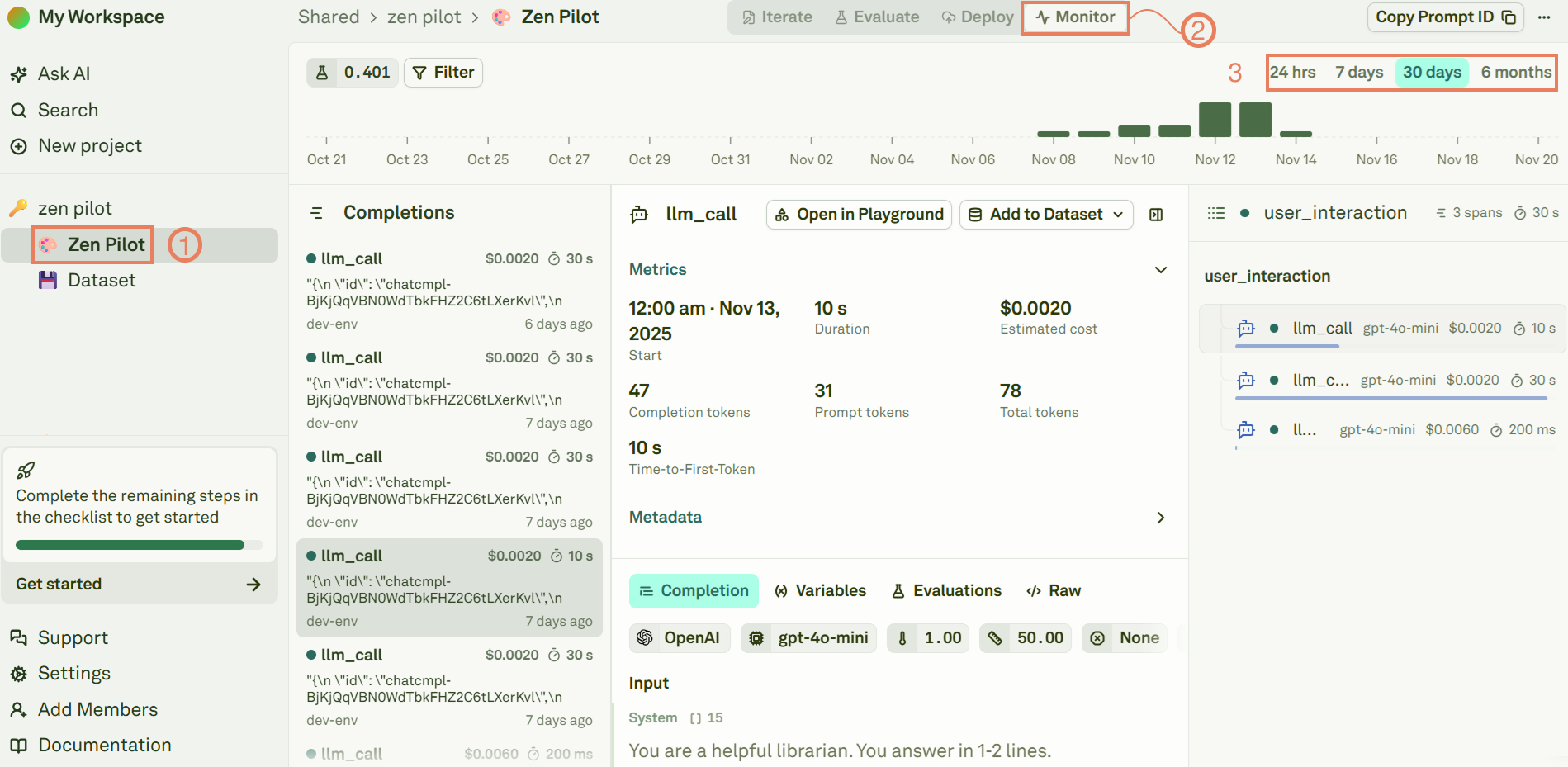

View spans for a prompt

Each prompt in Adaline can have spans associated with it. Navigate to the Monitor section of a specific prompt to see all spans logged against it.

Span types

Adaline recognizes several span types, each representing a different kind of operation:

| Span type | Description | Key metrics |
|---|---|---|
| Model | LLM inference calls (chat completions, text generation) | Latency, input/output tokens, cost, model name |
| ModelStream | Streaming LLM responses | Latency, input/output tokens, cost, model name |
| Tool | Function and tool executions | Latency, input/output data |
| Embedding | Vector embedding generation | Latency, tokens, model name |
| Retrieval | RAG and vector database queries | Latency, query, results |
| Function | Custom application logic | Latency, input/output |
| Guardrail | Safety and compliance checks | Latency, pass/fail status |
| Other | Any custom operation type | Latency, input/output |
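When you post-process spans outside the Adaline UI, it can help to model the span types from the table explicitly. The sketch below is illustrative only: `SpanType`, `LLM_SPAN_TYPES`, and `summarize_latency` are hypothetical names, and the dictionary keys `type` and `latency_ms` are assumptions rather than Adaline's actual export schema.

```python
from collections import defaultdict
from enum import Enum
from statistics import mean


class SpanType(str, Enum):
    """Span types from the table; values mirror the table labels."""
    MODEL = "Model"
    MODEL_STREAM = "ModelStream"
    TOOL = "Tool"
    EMBEDDING = "Embedding"
    RETRIEVAL = "Retrieval"
    FUNCTION = "Function"
    GUARDRAIL = "Guardrail"
    OTHER = "Other"


# Model and ModelStream are the two LLM call span types.
LLM_SPAN_TYPES = {SpanType.MODEL, SpanType.MODEL_STREAM}


def summarize_latency(spans):
    """Return the mean latency per span type for a list of span dicts.

    Assumes each span is a dict with the hypothetical keys
    "type" (a table label) and "latency_ms" (a number).
    """
    by_type = defaultdict(list)
    for span in spans:
        by_type[SpanType(span["type"])].append(span["latency_ms"])
    return {span_type: mean(values) for span_type, values in by_type.items()}
```

Using an enum means an unexpected type label raises a `ValueError` immediately instead of silently creating a new bucket.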

Inspect a span

Click on any span (from the trace view or the prompt spans view) to open its detail panel:

| Section | What you see |
|---|---|
| Input | The complete request data sent to the operation (e.g., full prompt messages for LLM spans). |
| Output | The complete response data returned (e.g., model response content). |
| Metrics | Latency, token counts (input/output), and calculated cost. |
| Model info | Provider name and model used (for LLM and embedding spans). |
| Variables | Variable values associated with the span (set via SDK or proxy headers). |
| Tags | String labels for categorical filtering (e.g., rag-pipeline, premium-user). |
| Attributes | Custom key-value metadata (e.g., user_id, session_id, environment). |
| Errors | Error messages and stack traces if the operation failed. |
| Evaluation scores | Grade, numeric score, and reason from continuous evaluations, if enabled. |

Analyze LLM spans

Spans of type Model and ModelStream (LLM calls) provide the richest set of details:

- Complete prompt messages: All system, user, and assistant messages sent to the model, exactly as they were at runtime.
- Full model response: The complete response content, including tool calls if applicable.
- Token breakdown: Separate counts for input tokens (prompt) and output tokens (response), so you can see where token budgets are being consumed.
- Cost calculation: Precise cost based on the model’s token pricing, computed from actual usage.
- Variable values: The resolved values of any {{variables}} at the time of execution, useful for reproducing specific inputs.
- Evaluation results: If continuous evaluations are enabled, the evaluation grade, score, and reason are shown alongside the span. This lets you immediately see whether the model’s output met your quality criteria.
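To make the token breakdown and cost calculation concrete, here is a minimal sketch of how a per-span cost can be derived from separate input and output token counts. Adaline computes this for you; `span_cost` is a hypothetical helper, the per-million-token pricing unit is an assumption, and the prices are made-up placeholders rather than real model pricing.

```python
from decimal import Decimal


def span_cost(input_tokens, output_tokens,
              input_price_per_million, output_price_per_million):
    """Cost in USD for one LLM span, pricing input and output tokens separately.

    Prices are USD per one million tokens (an assumed unit).
    Decimal avoids binary floating-point noise in money arithmetic.
    """
    million = Decimal(1_000_000)
    input_cost = Decimal(input_tokens) * Decimal(input_price_per_million) / million
    output_cost = Decimal(output_tokens) * Decimal(output_price_per_million) / million
    return input_cost + output_cost


# Placeholder prices, not real model pricing.
cost = span_cost(
    input_tokens=1_200,
    output_tokens=300,
    input_price_per_million="3.00",
    output_price_per_million="15.00",
)
print(cost)
```

Because output tokens are often priced higher than input tokens, keeping the two counts separate shows which side of the call dominates the cost of a span.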

Analyze tool and retrieval spans

For Tool and Retrieval spans, the detail panel shows:

- Input: The function name, parameters, or query sent to the tool or vector database.
- Output: The returned results, retrieved documents, or API response.
- Duration: How long the external call took, helping you identify slow integrations.
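Once spans are available as plain records (for example, from a JSON export), finding slow integrations amounts to sorting Tool and Retrieval spans by duration. A minimal sketch, assuming each record is a dict with the hypothetical keys `type`, `name`, and `latency_ms`:

```python
def slowest_external_calls(spans, top_n=3):
    """Return the top_n slowest Tool and Retrieval spans, slowest first.

    Assumes hypothetical keys "type" and "latency_ms" on each span dict.
    """
    external = [s for s in spans if s["type"] in ("Tool", "Retrieval")]
    return sorted(external, key=lambda s: s["latency_ms"], reverse=True)[:top_n]


example_spans = [
    {"type": "Tool", "name": "search_api", "latency_ms": 850},
    {"type": "Model", "name": "chat", "latency_ms": 2400},
    {"type": "Retrieval", "name": "vector_lookup", "latency_ms": 120},
    {"type": "Tool", "name": "calculator", "latency_ms": 15},
]
slow = slowest_external_calls(example_spans, top_n=2)
```

Here the Model span is excluded even though it has the highest latency, because only external tool and retrieval calls are being ranked.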

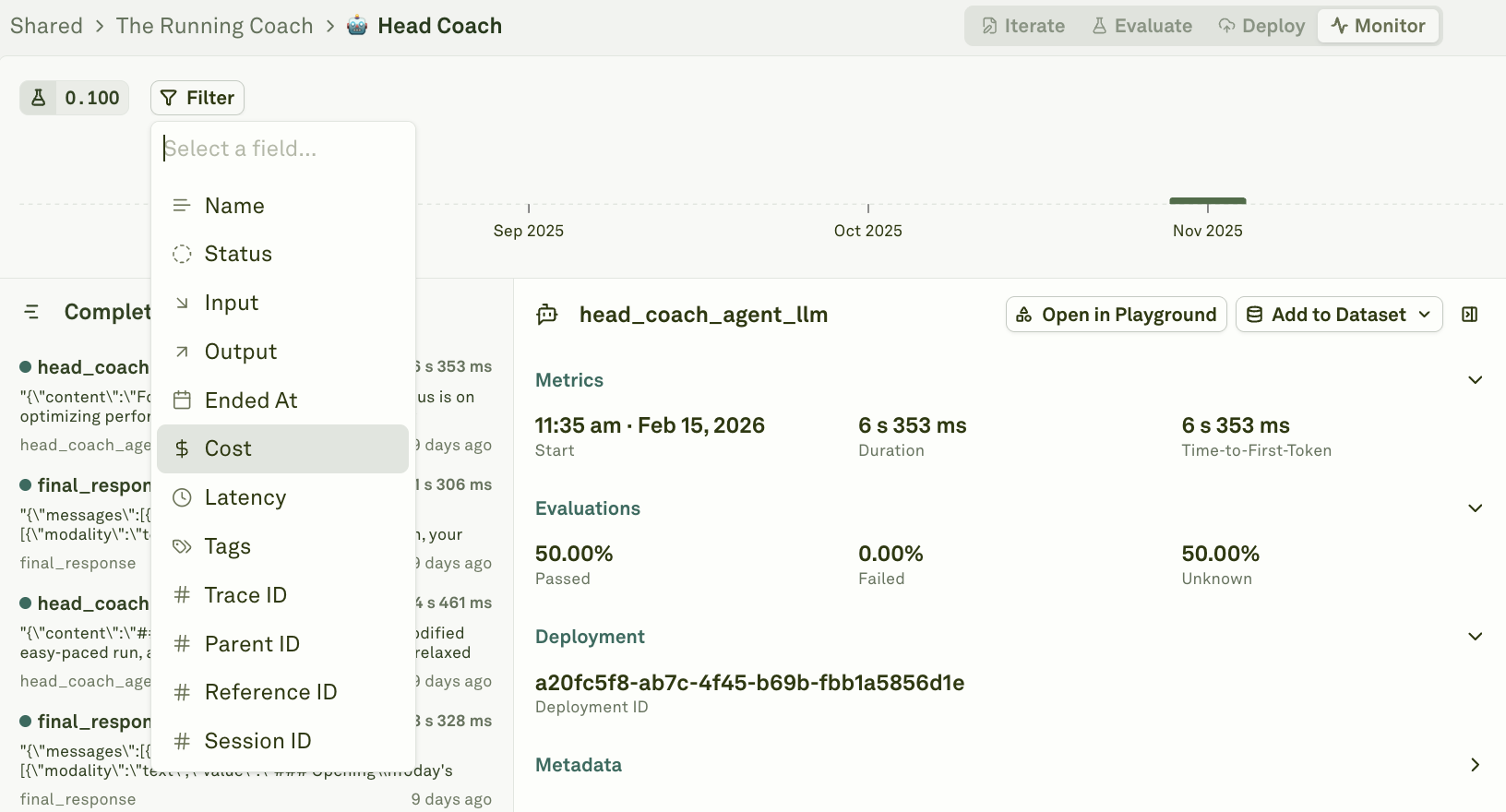

Filter spans

Use filters to narrow down spans across large volumes of data. You can filter by span type, status, duration, cost, tags, attributes, and prompt association — and combine multiple filters to isolate exactly the spans you need.
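The same idea of combining filters can be reproduced locally on exported span records. The sketch below assumes that combined filters act as a logical AND, and uses hypothetical keys (`type`, `latency_ms`, `cost`, `tags`, `attributes`); it does not call any Adaline API.

```python
def matches(span, *, span_type=None, min_latency_ms=None, max_cost=None,
            tags=(), attributes=None):
    """Return True if the span satisfies every filter that was provided."""
    if span_type is not None and span.get("type") != span_type:
        return False
    if min_latency_ms is not None and span.get("latency_ms", 0) < min_latency_ms:
        return False
    if max_cost is not None and span.get("cost", 0) > max_cost:
        return False
    # Every requested tag must be present on the span.
    if not set(tags).issubset(span.get("tags", [])):
        return False
    # Every requested attribute must match exactly.
    for key, value in (attributes or {}).items():
        if span.get("attributes", {}).get(key) != value:
            return False
    return True


sample_spans = [
    {"type": "Model", "latency_ms": 1800, "cost": 0.004,
     "tags": ["rag-pipeline"], "attributes": {"environment": "production"}},
    {"type": "Model", "latency_ms": 300, "cost": 0.001,
     "tags": ["rag-pipeline"], "attributes": {"environment": "staging"}},
    {"type": "Tool", "latency_ms": 2500, "cost": 0.0,
     "tags": [], "attributes": {"environment": "production"}},
]

slow_prod_llm = [
    s for s in sample_spans
    if matches(s, span_type="Model", min_latency_ms=1000,
               tags=["rag-pipeline"], attributes={"environment": "production"})
]
```

Filters that are left unset are skipped, so each added argument can only narrow the result set.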

Export prompt bench spans as JSON

Use the Export JSON button in a prompt bench Monitor view to download the currently filtered model spans, or call the public API to export spans programmatically with cursor-based pagination. See Export Logs for the span export request fields, response shape, and a pagination loop example.

Next steps

Analyze Log Charts

View aggregated metrics and trends over time.

Setup Continuous Evaluations

Automatically evaluate LLM spans on live data.

Use Logs to Improve Prompts

Debug issues by opening any span in the Playground.

Filter and Search Logs

Find specific spans with filters and metadata search.